10 Common LLM Data Annotation Mistakes (And How to Fix Them)

Large Language Models (LLMs) are rapidly transforming enterprise AI. Organizations are racing to integrate them into their operations, hoping to automate complex tasks and improve customer experiences. However, a capable model depends on one critical foundation: high-quality LLM training data.

LLM data annotation is significantly more complex than traditional NLP labeling. Instead of simply identifying nouns or basic sentiments, annotators must evaluate complex reasoning, contextual nuance, and multi-turn conversations. Because of this added complexity, many companies face severe LLM training data issues caused by poor labeling processes.

When annotation goes wrong, the consequences show up directly in model behavior: frequent hallucinations, ingrained bias, low overall accuracy, and poor reasoning.

This post highlights the most common AI data mistakes companies make. We will explain how to avoid these pitfalls and outline best practices for building scalable, high-quality data annotation pipelines.

What is LLM Data Annotation?

LLM data annotation is the process of labeling text, conversations, and responses to train large language models to understand instructions, context, and reasoning patterns.

Unlike older data categorization work, LLM annotation calls for highly nuanced feedback. Common examples of this work include:

- Instruction-response labeling

- Sentiment and intent tagging

- Hallucination detection

- RLHF (Reinforcement Learning from Human Feedback) preference ranking

- Conversation quality scoring

Building these LLM training datasets requires more than basic reading comprehension. Successful annotation demands deep contextual understanding, domain expertise, consistent labeling guidelines, and multi-step human review.
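
To make one of the task types above concrete, here is a minimal sketch of what a single RLHF preference-ranking record might look like. The class and field names (`PreferenceRecord`, `prompt`, `responses`, `ranking`, `annotator_id`) are illustrative assumptions, not a standard schema.

```python
from dataclasses import dataclass


@dataclass
class PreferenceRecord:
    """One hypothetical preference-ranking annotation."""
    prompt: str
    responses: list[str]
    ranking: list[int]  # indices into `responses`, best first
    annotator_id: str

    def validate(self) -> None:
        # A ranking must mention every response exactly once.
        if sorted(self.ranking) != list(range(len(self.responses))):
            raise ValueError("ranking must be a permutation of response indices")

    def pairwise_preferences(self) -> list[tuple[str, str]]:
        """Expand the ranking into (chosen, rejected) pairs."""
        self.validate()
        ordered = [self.responses[i] for i in self.ranking]
        return [
            (ordered[i], ordered[j])
            for i in range(len(ordered))
            for j in range(i + 1, len(ordered))
        ]


record = PreferenceRecord(
    prompt="Explain what a hash table is.",
    responses=["Answer A", "Answer B", "Answer C"],
    ranking=[1, 0, 2],  # annotator preferred B, then A, then C
    annotator_id="ann-001",
)
pairs = record.pairwise_preferences()
```

Validating the ranking at entry time catches skipped or duplicated responses before they reach the training set.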

Why Accurate LLM Training Data Matters

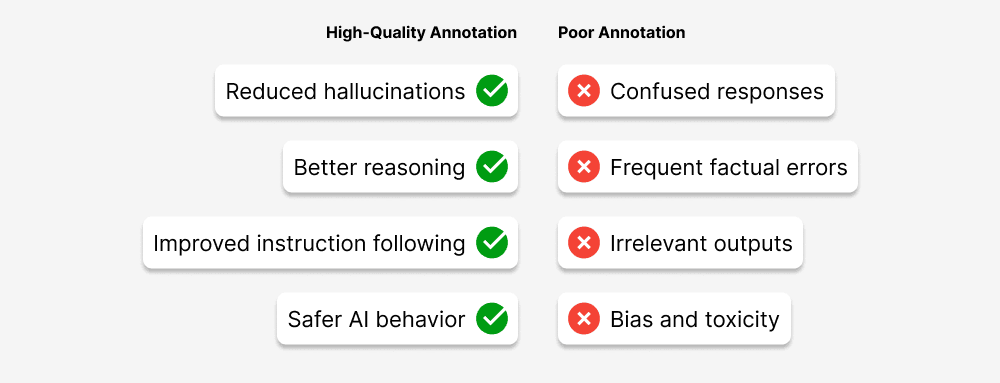

The output of an AI model is only as reliable as the data used to train it. High-quality annotation provides clear, accurate signals that teach the model how to respond appropriately. Poor annotation sends mixed signals, leading to erratic behavior.

Here is a quick breakdown of how annotation quality impacts model performance:

| High-Quality Annotation | Poor Annotation |
| --- | --- |
| Better reasoning | Confused responses |
| Reduced hallucinations | Frequent factual errors |
| Improved instruction following | Irrelevant outputs |
| Safer AI behavior | Bias and toxicity |

The core takeaway is simple: the intelligence and reliability of an LLM are directly tied to the quality of its annotated training data.

10 Common LLM Data Annotation Mistakes Companies Make

1. Using Annotators Without LLM Context Training

Many teams assume traditional data annotators can seamlessly transition to labeling LLM data. This is a major oversight. LLM annotation requires evaluating conversational nuance, complex instruction following, and logical reasoning. Without specialized LLM annotator training, workers provide inconsistent training signals, which ultimately degrades model performance.

2. Poorly Defined Annotation Guidelines

Vague instructions create one of the biggest LLM training data issues. When annotation guidelines lack clear examples or use inconsistent scoring scales, the resulting dataset becomes highly unreliable. Teams should establish detailed annotation playbooks that include specific edge-case examples and undergo continuous refinement.
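
One way to keep scoring scales consistent is to encode the rubric and reject any score that falls outside it. The sketch below assumes a hypothetical two-criterion rubric on a 1 to 5 scale; the criterion names and the `validate_scores` helper are illustrative only.

```python
# Hypothetical rubric: every criterion uses the same fixed 1-5 scale.
RUBRIC = {
    "helpfulness": {"min": 1, "max": 5},
    "factual_accuracy": {"min": 1, "max": 5},
}


def validate_scores(scores: dict[str, int]) -> list[str]:
    """Return a list of problems; an empty list means the scores follow the rubric."""
    problems = []
    for criterion, scale in RUBRIC.items():
        if criterion not in scores:
            problems.append(f"missing score for {criterion}")
        elif not scale["min"] <= scores[criterion] <= scale["max"]:
            problems.append(
                f"{criterion} score {scores[criterion]} is outside "
                f"{scale['min']}-{scale['max']}"
            )
    for criterion in scores:
        if criterion not in RUBRIC:
            problems.append(f"unknown criterion {criterion}")
    return problems


ok = validate_scores({"helpfulness": 4, "factual_accuracy": 5})
bad = validate_scores({"helpfulness": 7})
```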

3. Ignoring Context in Multi-Turn Conversations

Conversational LLMs are trained on multi-turn dialogue, where the meaning of each message depends on what came before. A common mistake is labeling individual messages independently, ignoring the surrounding context. Models trained on such labels struggle to track conversation history, resulting in chatbots that forget earlier user queries.
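
As a rough illustration, an annotation task can be built so that every assistant reply is shown to the annotator together with all preceding turns. The message format (`role` and `content` keys) and the `build_annotation_items` helper are assumptions made for this sketch.

```python
def build_annotation_items(conversation: list[dict]) -> list[dict]:
    """Create one annotation item per assistant turn, each carrying the full prior history."""
    items = []
    for i, turn in enumerate(conversation):
        if turn["role"] == "assistant":
            items.append({"context": conversation[:i], "response": turn["content"]})
    return items


conversation = [
    {"role": "user", "content": "I need a flight to Paris on Friday."},
    {"role": "assistant", "content": "Sure. Morning or evening?"},
    {"role": "user", "content": "Evening. And add a hotel."},
    {"role": "assistant", "content": "Booked an evening flight to Paris and a hotel."},
]
items = build_annotation_items(conversation)
```

Here the second item carries three turns of context, so the annotator can check that the final reply honors both the destination from the first message and the later hotel request.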

4. Lack of Quality Control Processes

Skipping multi-layer quality review is a dangerous shortcut. Companies often fail to use reviewer validation, regular sampling audits, or agreement metrics. To ensure accuracy, organizations must implement inter-annotator agreement tracking, gold standard tests, and automated quality checks.
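
Inter-annotator agreement can be tracked in several ways. One common choice for two annotators is Cohen's kappa, which corrects raw agreement for the agreement expected by chance. The function below is a minimal sketch of that statistic, not a prescribed method from any particular platform.

```python
from collections import Counter


def cohens_kappa(labels_a: list[str], labels_b: list[str]) -> float:
    """Cohen's kappa for two annotators who labeled the same items in the same order."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("both annotators must label the same non-empty set of items")
    n = len(labels_a)
    # Observed agreement: fraction of items given identical labels.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Chance agreement: product of each annotator's label frequencies, summed over labels.
    counts_a = Counter(labels_a)
    counts_b = Counter(labels_b)
    expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used a single identical label throughout
    return (observed - expected) / (1 - expected)


annotator_1 = ["good", "good", "bad", "bad"]
annotator_2 = ["good", "bad", "bad", "bad"]
kappa = cohens_kappa(annotator_1, annotator_2)
```

In this example the annotators agree on three of four items (0.75 raw agreement), but half of that agreement is expected by chance, so kappa is 0.5.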

5. Bias in Training Data

Bias is one of the most serious AI data mistakes a company can make. Training data can easily absorb geographic, cultural, gender, or language bias from annotators. This leads to unfair, toxic, or highly inaccurate AI outputs. Mitigation strategies require diverse annotator pools, routine bias audits, and carefully balanced datasets.
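
A routine bias audit can begin with something as simple as comparing how often a label is applied across groups. The sketch below assumes hypothetical records with `label` and `language` fields. A large gap does not prove bias on its own, but it flags a slice of the data for human review.

```python
from collections import defaultdict


def label_rate_by_group(records: list[dict], label: str, group_key: str) -> dict[str, float]:
    """Fraction of records in each group that received the given label."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for record in records:
        group = record[group_key]
        totals[group] += 1
        if record["label"] == label:
            hits[group] += 1
    return {group: hits[group] / totals[group] for group in totals}


# Hypothetical toxicity labels on text written in two languages.
records = (
    [{"language": "en", "label": "toxic"}]
    + [{"language": "en", "label": "ok"}] * 3
    + [{"language": "hi", "label": "toxic"}] * 3
    + [{"language": "hi", "label": "ok"}]
)
rates = label_rate_by_group(records, label="toxic", group_key="language")
gap = max(rates.values()) - min(rates.values())
```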

6. Over-Reliance on Synthetic Data

While synthetic data is helpful for scaling, relying on it too heavily introduces major risks. Machine-generated data often contains repetitive patterns, unrealistic conversational flows, and reduced linguistic diversity. The best practice is to combine real-world human datasets with targeted synthetic augmentation.
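
Reduced linguistic diversity can be measured before synthetic data is mixed in. One simple proxy, used here as an assumption rather than something the pipeline above prescribes, is a distinct-n ratio: the number of unique n-grams divided by the total number of n-grams. Lower values suggest more repetitive text.

```python
def distinct_n(texts: list[str], n: int = 2) -> float:
    """Unique n-grams divided by total n-grams across all texts (whitespace tokens)."""
    total = 0
    unique = set()
    for text in texts:
        tokens = text.lower().split()
        # zip over n shifted copies of the token list yields consecutive n-grams.
        ngrams = list(zip(*(tokens[i:] for i in range(n))))
        total += len(ngrams)
        unique.update(ngrams)
    return len(unique) / total if total else 0.0


synthetic = ["how can i help you today", "how can i help you now"]
human = ["where is my order", "refund has not arrived yet"]
synthetic_score = distinct_n(synthetic)
human_score = distinct_n(human)
```

The two templated synthetic sentences share most of their bigrams and score lower than the two unrelated human sentences. Real audits would use far larger samples and a proper tokenizer.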

7. Not Labeling Edge Cases and Ambiguity

LLMs frequently struggle with complex, ambiguous scenarios like sarcasm, contradictory instructions, or incomplete user queries. If annotators ignore these edge cases, the model becomes easily confused during real-world application. Labeling ambiguous inputs carefully helps the AI learn how to ask clarifying questions or handle uncertainty.

8. Inconsistent Annotation Across Teams

Large datasets usually require distributed annotation teams. Without strong central management, teams with varying skill levels develop different interpretations of the rules, which leads to inconsistent standards. Centralized quality assurance systems and ongoing annotator calibration sessions are vital for keeping everyone aligned.
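
Calibration is easier to target when every team is scored against the same gold-standard items. The following sketch assumes a hypothetical layout in which each team's labels and the gold labels are dictionaries keyed by item ID. The 0.9 threshold is an arbitrary example value.

```python
def accuracy_on_gold(submissions: dict[str, dict[str, str]], gold: dict[str, str]) -> dict[str, float]:
    """Per-team accuracy on the gold items that team actually labeled."""
    result = {}
    for team, labels in submissions.items():
        scored = [item_id for item_id in labels if item_id in gold]
        if not scored:
            continue  # this team saw no gold items, so there is nothing to score
        correct = sum(labels[item_id] == gold[item_id] for item_id in scored)
        result[team] = correct / len(scored)
    return result


def teams_needing_calibration(scores: dict[str, float], threshold: float = 0.9) -> list[str]:
    """Teams whose gold accuracy falls below the threshold, sorted by name."""
    return sorted(team for team, score in scores.items() if score < threshold)


gold = {"q1": "safe", "q2": "unsafe", "q3": "safe", "q4": "safe"}
submissions = {
    "team_a": {"q1": "safe", "q2": "unsafe", "q3": "safe", "q4": "safe"},
    "team_b": {"q1": "safe", "q2": "safe", "q3": "safe", "q4": "unsafe"},
}
scores = accuracy_on_gold(submissions, gold)
flagged = teams_needing_calibration(scores)
```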

9. Ignoring Domain Expertise

Generalist annotators cannot reliably label specialized content. Fields like finance, healthcare, legal analysis, and technical documentation require specific background knowledge. Using domain-specific annotation improves the model’s factual accuracy and logical reasoning in specialized use cases.

10. Scaling Annotation Without Infrastructure

Companies frequently attempt to scale their data labeling operations too quickly. This results in fragmented workflows, poor dataset versioning, and basic annotation tools that cannot keep up with the volume. Teams need structured annotation pipelines and professional data annotation platforms to manage high-volume labeling successfully.
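
Dataset versioning does not have to start with heavy tooling. A minimal sketch, assuming records are JSON-serializable dictionaries, is to derive a version ID from a hash of the content so that any change to a label yields a new ID. The `dataset_version` helper is hypothetical, and production pipelines would typically rely on a dedicated versioning system.

```python
import hashlib
import json


def dataset_version(records: list[dict]) -> str:
    """Short content hash: the same records in any order produce the same ID."""
    # Serialize each record with sorted keys, then sort the records themselves,
    # so neither key order nor record order affects the hash.
    canonical = sorted(json.dumps(r, sort_keys=True, ensure_ascii=False) for r in records)
    digest = hashlib.sha256("\n".join(canonical).encode("utf-8")).hexdigest()
    return digest[:12]


v1 = dataset_version([
    {"id": 1, "text": "Reset my password", "label": "account"},
    {"id": 2, "text": "Where is my refund?", "label": "billing"},
])
v1_reordered = dataset_version([
    {"label": "billing", "id": 2, "text": "Where is my refund?"},
    {"id": 1, "text": "Reset my password", "label": "account"},
])
v2 = dataset_version([
    {"id": 1, "text": "Reset my password", "label": "security"},  # relabeled
    {"id": 2, "text": "Where is my refund?", "label": "billing"},
])
```

Recording the version ID alongside each training run makes it possible to trace a model regression back to the exact set of labels it was trained on.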

How to Avoid These LLM Data Annotation Mistakes

Preventing these errors requires a proactive, structured approach. Here are actionable recommendations to keep your data pipelines healthy:

- Develop clear annotation guidelines: Create exhaustive playbooks with strong examples.

- Train annotators specifically for LLM tasks: Ensure they understand reasoning and context.

- Use multi-layer quality control: Do not rely on a single pass for data validation.

- Incorporate human-in-the-loop validation: Keep human experts involved in continuous model testing.

- Maintain dataset version control: Track changes to your data just like software code.

- Use domain experts when needed: Hire specialists for technical, medical, or legal data.

Because building this infrastructure internally is highly resource-intensive, enterprise AI teams increasingly partner with specialized providers to handle the heavy lifting.

How Macgence Helps Solve LLM Training Data Issues

Building flawless training data requires deep expertise and robust infrastructure. Macgence supports organizations by delivering enterprise-grade data solutions tailored for modern AI.

Macgence handles large-scale LLM data annotation, RLHF preference ranking, and multi-turn conversation labeling. For specialized models, it provides domain-specific dataset creation and multilingual training data, all backed by strict enterprise quality assurance pipelines.

By partnering with Macgence, companies gain access to a highly trained annotator workforce, scalable data operations, and consistent dataset quality. This can mean faster model development cycles and fewer post-launch errors.

With structured workflows and expert annotators, Macgence helps AI teams build reliable datasets that power high-performing large language models.

Future of LLM Data Annotation

The landscape of AI is shifting rapidly. Emerging trends are placing even more emphasis on human-driven feedback. Concepts like RLHF and preference learning are becoming standard practice. Additionally, AI-assisted annotation tools are speeding up basic tasks, while multimodal LLM datasets (combining text, image, and audio) are expanding the scope of what annotators must evaluate.

Safety and alignment labeling will also grow in importance as AI regulations tighten. Domain-specific training data will continue to be a primary way enterprises build competitive moats. Ultimately, underlying data quality will remain the biggest differentiator for commercial AI models.

Securing Your AI’s Future with Better Data

LLM success depends heavily on high-quality training data. Unfortunately, many companies struggle to reach their AI goals due to common AI data mistakes, ranging from vague guidelines to unmitigated bias. Overcoming these LLM training data issues means acknowledging that proper processes, highly skilled annotators, and multi-layered quality control are essential.

Organizations that invest in reliable LLM data annotation today will build more accurate, trustworthy, and scalable AI systems tomorrow.

FAQs

What is LLM data annotation?

LLM data annotation involves labeling text, conversations, and responses so large language models can learn context, intent, reasoning, and safe behavior.

What are the most common LLM training data issues?

Common issues include inconsistent labeling, poor guidelines, bias in datasets, lack of quality control, and insufficient domain expertise.

Why does high-quality annotation matter?

High-quality annotation improves model accuracy, reduces hallucinations, and enables better reasoning and instruction following.

How can companies improve annotation quality?

Companies improve quality by using trained annotators, strong guidelines, multi-layer QA systems, and specialized data annotation partners.
