- What Is Data Labeling (and Why It Matters in Production AI)

- Common Data Labeling Quality Issues in Real Projects

- The Hidden Costs of Poorly Labeled Data

- Real-World Scenarios of Poor Labeling Impact

- How to Detect Data Labeling Quality Issues Early

- Best Practices to Avoid Poor Training Data Impact

- Why Data Labeling Quality Is a Competitive Advantage

- Fix the Labels Before You Fix the Model

The Hidden Cost of Poorly Labeled Data in Production AI Systems

When an AI system fails in production, the immediate instinct is to blame the model architecture. Teams scramble to tweak hyperparameters, add layers, or switch algorithms entirely. But more often than not, the culprit isn’t the code—it’s the data used to teach it.

While companies pour resources into hiring top-tier data scientists and acquiring expensive compute power, the foundation of machine learning—data labeling—is frequently treated as an afterthought. This oversight creates “hidden costs” that only surface once an AI system is live and interacting with real users. These aren’t just technical glitches; they manifest as wrong predictions, user distrust, and significant business losses.

If you don’t address data labeling quality issues early, they silently undermine your entire project. From autonomous vehicles misinterpreting stop signs to chatbots giving offensive answers, AI model errors often trace back to the initial annotation phase. This article explores why poor labels break systems and how you can prevent these costly mistakes.

What Is Data Labeling (and Why It Matters in Production AI)

At its core, data labeling is the process of adding context to raw data so a machine learning model can learn from it. This might look like drawing bounding boxes around cars in a video frame, transcribing audio files into text, or tagging customer reviews as “positive” or “negative.”

In a training environment, labels act as the ground truth. They are the textbook the model studies to understand the world. However, there is a distinct difference between training and production environments. In production, the model faces the chaotic, unlabeled real world. If the “textbook” it studied (the training data) contained errors, the model will confidently replicate those errors in the wild.

It is crucial to remember that models do not learn reality; they learn the labels you give them. If a dog is labeled as a cat 100 times, the model will learn that the animal’s barking is a cat. This disconnect is the primary driver of poor training data impact. If the input is flawed, the output will inevitably be flawed, regardless of how sophisticated the algorithm is.

Common Data Labeling Quality Issues in Real Projects

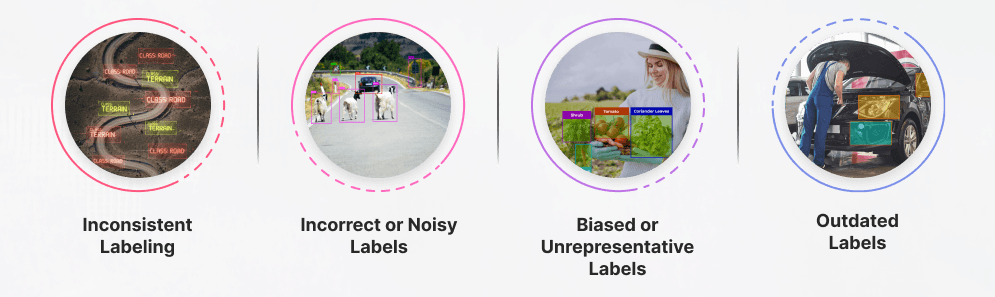

To fix labeling problems, you first need to identify them. Quality issues usually fall into four specific categories that plague real-world AI projects.

Inconsistent Labeling

Inconsistency occurs when different annotators—or even the same annotator at different times—interpret labeling rules differently. For example, in a geospatial project, one person might label a dirt path as a “road,” while another labels it “terrain.” Without strict guidelines, the model receives conflicting instructions, leading to a confused system that struggles to generalize.

Incorrect or Noisy Labels

These are straightforward errors: a human clicks the wrong button, a bounding box is too loose, or an automated pre-labeling script fails. This “noise” spreads rapidly into the training data. If a dataset has a 10% error rate, the model is essentially being taught to be wrong 10% of the time.

Biased or Unrepresentative Labels

This issue arises when the labeled data doesn’t reflect the full reality the model will encounter. It might include missing edge cases (like a self-driving car dataset with no images of snow) or a skewed class distribution (a fraud detection dataset with 99% legitimate transactions and only 1% fraud). The model learns to favor the majority class, ignoring the critical minority.

Outdated Labels

Data evolves, but labels often stay static. This concept, known as data drift, is common in dynamic fields. For instance, slang on social media changes rapidly. If a sentiment analysis model is trained on internet slang from 2015, it will fail to understand comments written in 2024. These data labeling quality issues render the model obsolete before it is even deployed.

The Hidden Costs of Poorly Labeled Data

When bad data enters the pipeline, the costs extend far beyond the engineering team. They ripple through the entire organization, affecting technical performance, financial stability, and brand reputation.

Technical Cost: Lower Model Accuracy

The most direct consequence is a drop in performance. When a model trains on inconsistent or incorrect labels, it cannot converge on an optimal solution. It learns patterns that don’t exist or misses patterns that do. This leads to persistent AI model errors that are difficult to debug, even though the code itself is functionally correct. The engineering team can spend weeks searching for a bug that doesn’t exist in the software.

Business Cost: Bad Decisions at Scale

AI is designed to automate decision-making. When those decisions are based on poor training data impact, the errors scale instantly. A fraud detection system might flag thousands of legitimate customers as criminals, freezing their accounts. A recommendation engine might suggest irrelevant products, driving down conversion rates. A search algorithm might fail to surface the right documents. These aren’t just bugs; they are operational failures that directly harm the bottom line.

Financial Cost: Re-training and Re-labeling

Fixing a model broken by bad data is expensive. You cannot simply patch the code. You have to audit the dataset, pay for re-labeling (which often costs more than the initial labeling), and then re-train the model. This consumes massive amounts of expensive GPU compute time and delays the product roadmap, burning through the budget while the competition moves ahead.

Brand & Trust Cost

User trust is hard to gain and easy to lose. If an AI product frustrates users—like a voice assistant that constantly misunderstands commands or a medical diagnostic tool that yields false positives—users will abandon it. In sensitive industries like finance or healthcare, these failures can also attract regulatory scrutiny and fines.

Real-World Scenarios of Poor Labeling Impact

To understand the severity of these issues, it helps to look at hypothetical scenarios across different industries.

Example 1: Computer Vision in Manufacturing

A factory deploys a computer vision system to detect defects on an assembly line. However, the training data had loose bounding boxes around the defects. As a result, the model learns to associate the background conveyor belt with the defect rather than the crack in the product. The system starts rejecting perfectly good products, causing unnecessary waste and production delays.

Example 2: NLP Sentiment Analysis

A retail company uses a sentiment classifier to route customer support tickets. The annotators were inconsistent about labeling sarcasm. Some labeled sarcastic reviews as “positive” because of the words used (e.g., “Great job breaking my package”), while others labeled them “negative.” The confusion causes the classifier to route angry customers to the wrong support queue, exacerbating their frustration.

Example 3: Healthcare AI

In a medical imaging project, a small percentage of X-rays were mislabeled regarding the presence of a fracture due to low-resolution images provided to annotators. This seemingly minor error rate leads to the model missing actual fractures in a clinical setting, posing a severe risk to patient health and exposing the hospital to liability.

How to Detect Data Labeling Quality Issues Early

Waiting for user complaints is the worst way to find out your data is bad. You need proactive measures to catch issues before training begins.

Start with label consistency checks. If you have multiple annotators, use “inter-annotator agreement” metrics (like Cohen’s kappa) to measure how often they agree on the same data point. Low agreement usually points to ambiguous guidelines.

Implement random sampling and audits throughout the labeling process. Don’t just check the first batch; check batches in the middle and end of the project to ensure quality hasn’t degraded due to annotator fatigue.

Finally, monitor prediction confidence in production. If the model is consistently unsure (low confidence scores) about specific types of inputs, pull that data and review how similar examples were labeled in the training set. This creates a feedback loop that helps identify data labeling quality issues quickly.

Best Practices to Avoid Poor Training Data Impact

Building a robust AI system requires a robust data strategy. Here are four best practices to insulate your project from data-related failures.

Clear Labeling Guidelines

Your labeling instructions should be treated like a legal contract. They must be explicit, detailed, and visual. Define edge cases clearly. If a car is 50% occluded by a tree, should it be labeled? Provide “gold standard” examples of correct and incorrect labels so annotators have a reference point.

Human-in-the-Loop Review

Automation is great, but human oversight is mandatory. Implement a review hierarchy where senior annotators or domain experts validate the work of junior annotators. Spot checks should be a regular part of the workflow, not an afterthought.

Iterative Label Improvement

Data labeling is not a “one and done” task. As your model improves, it will uncover edge cases you didn’t anticipate. Use these insights to refine your labeling guidelines and update your dataset. This cycle of continuous improvement prevents stagnation.

Invest in Quality Over Volume

A common misconception is that more data is always better. In reality, a smaller, high-quality dataset often outperforms a massive, noisy one. Prioritize getting 10,000 perfectly labeled examples over 100,000 messy ones. This approach reduces poor training data impact and makes debugging significantly easier.

Why Data Labeling Quality Is a Competitive Advantage

Companies that view labeling as low-level grunt work are destined to struggle. Conversely, organizations that treat labeling as critical infrastructure gain a massive competitive edge.

High-quality labels allow you to build better models faster. You spend less time troubleshooting AI model errors and more time innovating features. Furthermore, trusted data allows you to scale more safely. When you know your ground truth is solid, you can deploy with confidence. Labeling quality isn’t just a technical requirement; it is a strategic asset.

Fix the Labels Before You Fix the Model

If your AI project is underperforming, resist the urge to overhaul the architecture immediately. Look at the data first. Most AI failures stem from the information fed into the system, not the system itself.

The hidden costs of bad data—wasted budget, skewed decisions, and damaged reputation—are too high to ignore. By prioritizing data labeling quality issues, you ensure the long-term stability and success of your AI initiatives. Rethink how you treat your training data. Audit your pipelines, refine your guidelines, and remember: understanding labeling quality is the first step toward building reliable AI systems.

You Might Like

April 8, 2026

Why Data is the Real Bottleneck in Embodied AI Training

AI is moving off our screens and into the physical world. For years, artificial intelligence lived exclusively on servers and smartphones. Now, it is driving autonomous systems, powering delivery robots, and animating humanoids. This transition from software-only models to physical agents represents a massive shift in how machines interact with human environments. While there is […]

April 7, 2026

Why Synthetic Speech Data Isn’t Enough for Production AI

The voice AI market is experiencing explosive growth. From virtual assistants and call automation systems to interactive voice bots, companies are racing to build intelligent audio tools. To meet the demand for training information, developers are increasingly turning to synthetic speech data as a fast, highly scalable solution. Because of this rapid adoption, a common […]

April 6, 2026

Where to Buy High-Quality Speech Datasets for AI Training?

The demand for intelligent voice assistants, call analytics software, and multilingual AI models is growing rapidly. Developers are rushing to build smarter tools that understand human nuances. But the biggest challenge engineers face isn’t writing better algorithms. The main hurdle is finding reliable, scalable, and high-quality audio collections to train their models effectively. Training a […]