- What is Embodied AI Training?

- Why is Physical AI Gaining Momentum?

- The Critical Role of Data in Robotics

- Why is Data the Ultimate Bottleneck?

- Synthetic vs Real-World Data: The Trade-off

- How Are Companies Solving the Data Problem?

- Best Practices for Building Robust Datasets

- The Future of Physical Artificial Intelligence

- Rethinking the Path to Autonomous Systems

- FAQs

Why Data is the Real Bottleneck in Embodied AI Training

AI is moving off our screens and into the physical world. For years, most artificial intelligence lived on servers and smartphones. Now, it is driving autonomous vehicles, powering delivery robots, and animating humanoids. This transition from software-only models to physical agents represents a massive shift in how machines interact with human environments.

While there is immense hype surrounding these physical systems, the reality of building them reveals a significant hurdle. Models are advancing rapidly, but the true limitation lies elsewhere. The biggest bottleneck in robotics isn’t the algorithm. It is the data. Mastering embodied AI training requires a completely new approach to how we collect and manage information.

What is Embodied AI Training?

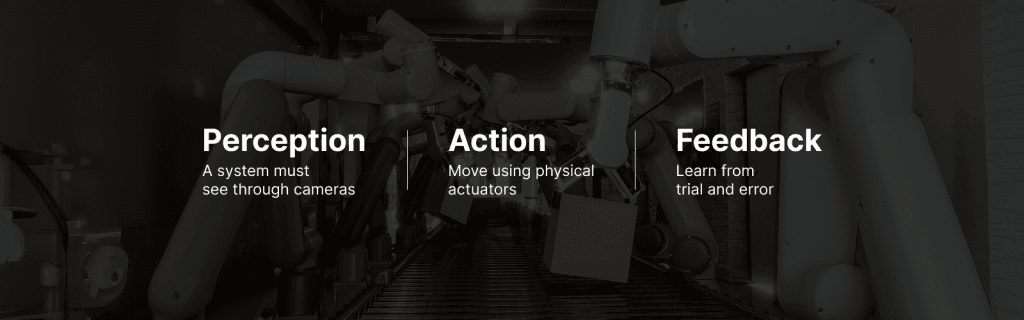

Training AI systems to interact with and learn from the physical world is fundamentally different from teaching a chatbot to generate text. Embodied AI training involves three core components: perception, action, and feedback loops. A system must see through cameras, move using physical actuators, and learn from trial and error in real time.
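To make the perception-action-feedback loop concrete, here is a deliberately tiny Python sketch. It assumes a hypothetical one-dimensional world (`ToyWorld`) in which a robot must reach a target position. The robot senses its distance to the target and sends a clamped motor command. It then uses the final error as feedback to choose between candidate controller gains by trial and error. Real systems replace each piece with cameras, learned policies, and far richer feedback, so treat this only as an illustration of the loop's shape.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass
class ToyWorld:
    """Hypothetical 1-D world: the robot starts at 0 and must reach `target`."""
    target: float = 10.0
    position: float = 0.0

    def sense(self) -> float:
        # Perception: observe the signed distance to the target.
        return self.target - self.position

    def actuate(self, command: float) -> None:
        # Action: clamp each step to +/-1.0, mimicking an actuator speed limit.
        self.position += max(-1.0, min(1.0, command))


def run_episode(gain: float, steps: int = 20) -> float:
    """Run one episode with a proportional controller and return the final error."""
    world = ToyWorld()
    for _ in range(steps):
        error = world.sense()         # perceive
        world.actuate(gain * error)   # act
    # Feedback: remaining distance to the target (lower is better).
    return abs(world.target - world.position)


def learn_gain(candidates: Iterable[float]) -> float:
    """Trial and error: try every candidate gain and keep the one with the lowest error."""
    return min(candidates, key=run_episode)
```

Calling `learn_gain([0.0, 0.1, 1.0])` picks `1.0`, the only candidate that reaches the target within 20 steps.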

Examples of this technology include humanoid robots navigating factory floors, autonomous delivery rovers crossing city streets, and industrial robotic arms assembling delicate components. Traditional AI training typically relies on static, historical datasets. Physical agents, in contrast, must act in dynamic, unpredictable environments where each action changes what they observe next, which makes training far more complex.

Why is Physical AI Gaining Momentum?

Recent breakthroughs are pushing physical AI into the spotlight. Robotics hardware has become cheaper and more capable. Simultaneously, foundation models, such as multimodal large language models and vision-language models, give machines a much better understanding of their surroundings.

Industry demand is surging across manufacturing automation, healthcare robotics, and smart environments. Established tech companies and ambitious startups alike are investing heavily, from Tesla's Optimus humanoid to Figure AI. Despite these leaps in hardware and algorithmic capability, wide-scale real-world deployment remains limited.

The Critical Role of Data in Robotics

People often say data is the new oil. In robotics, extracting that oil is incredibly difficult. Developing a capable robot requires multiple streams of complex information. Engineers need visual data from egocentric cameras, sensor readings from LiDAR and depth sensors, motion trajectories, and human demonstration records.
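These streams arrive at different rates from different devices, so a common first step is to line them up in time before they become training samples. The sketch below is a minimal, software-only approach. It assumes each sensor delivers sorted, timestamped readings; the `SensorStream` type and the tolerance value are hypothetical. Every reference frame, here from a camera, is paired with the nearest reading from each other stream. Frames with no close-enough match are dropped rather than padded.

```python
import bisect
from dataclasses import dataclass
from typing import Any, Dict, List


@dataclass
class SensorStream:
    """One modality (camera, LiDAR, ...) as readings with ascending timestamps in seconds."""
    name: str
    timestamps: List[float]  # assumed sorted and non-empty
    readings: List[Any]


def nearest_index(timestamps: List[float], t: float) -> int:
    """Index of the timestamp closest to t, using binary search on the sorted list."""
    i = bisect.bisect_left(timestamps, t)
    if i == 0:
        return 0
    if i == len(timestamps):
        return len(timestamps) - 1
    # Choose whichever neighbour is closer in time.
    return i if timestamps[i] - t < t - timestamps[i - 1] else i - 1


def align(reference: SensorStream, others: List[SensorStream],
          tolerance: float) -> List[Dict[str, Any]]:
    """Pair each reference reading with the nearest reading from every other stream.

    A reference time is dropped if any other stream has no reading within `tolerance`.
    """
    samples = []
    for t, value in zip(reference.timestamps, reference.readings):
        sample: Dict[str, Any] = {"t": t, reference.name: value}
        for stream in others:
            j = nearest_index(stream.timestamps, t)
            if abs(stream.timestamps[j] - t) > tolerance:
                break  # this modality is missing near time t
            sample[stream.name] = stream.readings[j]
        else:
            samples.append(sample)  # reached only when no stream was missing
    return samples
```

Consider camera frames at 0.0, 0.1 and 0.2 s, LiDAR sweeps at 0.02, 0.11 and 0.5 s, and a 0.05 s tolerance. The first two frames get paired. The third is dropped because its nearest sweep is 0.09 s away.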

Building comprehensive multimodal robotics datasets is essential to teach a machine how to interpret and react to its surroundings. Diversity and realism matter immensely. A robot trained in a pristine lab will quickly fail in a messy, unpredictable kitchen. This necessitates continuous learning and feedback loops rather than a one-time training session.

Why is Data the Ultimate Bottleneck?

Creating algorithms is getting easier, but feeding them the right information remains a monumental challenge. The core bottlenecks can be broken down into several specific areas.

Data Collection is Expensive

Gathering real-world robot training data requires specialized hardware setups and highly controlled environments. Recording a robot performing a task takes immense time, physical effort, and financial investment.

Lack of Standardized Datasets

Computer vision and natural language processing benefited heavily from massive, openly available datasets, such as ImageNet for image recognition. Robotics datasets, however, are highly fragmented, closely guarded by private companies, and very domain-specific.

Annotation Complexity

Labeling video frames for a self-driving car or a robotic arm is tedious and difficult. Annotators must label specific actions, object interactions, and complex temporal sequences. This often requires strict domain expertise to ensure accuracy.
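Part of what makes temporal annotation hard is keeping the labels internally consistent. A minimal validation pass can catch unknown labels, intervals outside the recording, and overlapping segments before they reach training. The sketch assumes each episode is annotated as a single track of non-overlapping action segments drawn from a fixed label vocabulary. The `Segment` type and the example labels are hypothetical, and annotation schemes that allow concurrent actions would need a different overlap rule.

```python
from dataclasses import dataclass
from typing import List, Set


@dataclass(frozen=True)
class Segment:
    """One labelled action interval in a recorded episode (times in seconds)."""
    label: str
    start: float
    end: float


def validate_segments(segments: List[Segment], duration: float,
                      vocabulary: Set[str]) -> List[str]:
    """Return a list of human-readable problems; an empty list means the track passed."""
    problems = []
    ordered = sorted(segments, key=lambda s: s.start)
    for seg in ordered:
        if seg.label not in vocabulary:
            problems.append(f"unknown label {seg.label!r} at {seg.start}s")
        # Each interval must be non-empty and lie inside the recording.
        if not 0.0 <= seg.start < seg.end <= duration:
            problems.append(f"bad interval {seg.start}-{seg.end}s for {seg.label!r}")
    # Single-track assumption: consecutive segments must not overlap.
    for prev, cur in zip(ordered, ordered[1:]):
        if cur.start < prev.end:
            problems.append(f"{prev.label!r} overlaps {cur.label!r} at {cur.start}s")
    return problems
```

A clean track such as reach (0 to 1.5 s), grasp (1.5 to 2 s), and lift (2 to 3 s) in a 3-second clip returns an empty list.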

Edge Cases and Real-World Variability

Lighting changes, unexpected obstacles, and human unpredictability routinely degrade models that performed well in testing. A simulation cannot easily replicate the exact physical properties of a wet floor, a sudden glare, or a moving crowd.

Scalability Challenges

Scaling real-world data collection is physically constrained. You cannot simply scrape the internet for physical interactions. Every new piece of data requires real-world movement and recording.

Synthetic vs Real-World Data: The Trade-off

To bypass physical collection limits, many engineers turn to synthetic data generated in computer simulations. This approach is highly scalable and cost-effective: you can generate millions of scenarios overnight.
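One common way to generate that variety is called domain randomization. It samples each scenario's visual and physical parameters at random before passing them to a simulator. The Python sketch below covers only the sampling step. The `Scenario` fields and their ranges are illustrative assumptions, not values from any particular simulator.

```python
import random
from dataclasses import dataclass
from typing import List


@dataclass
class Scenario:
    """Hypothetical parameters a simulator would consume for one synthetic episode."""
    light_intensity: float    # relative brightness, 1.0 = nominal (assumed range)
    floor_friction: float     # friction coefficient; low values mimic a wet floor
    num_obstacles: int        # clutter placed in the scene
    camera_jitter_deg: float  # small random camera tilt


def sample_scenarios(n: int, seed: int = 0) -> List[Scenario]:
    """Draw n randomized scenarios; the seed makes a batch reproducible."""
    rng = random.Random(seed)
    return [
        Scenario(
            light_intensity=rng.uniform(0.2, 2.0),
            floor_friction=rng.uniform(0.1, 1.0),
            num_obstacles=rng.randint(0, 8),      # inclusive of both ends
            camera_jitter_deg=rng.gauss(0.0, 2.0),
        )
        for _ in range(n)
    ]
```

Seeding the generator makes a batch reproducible. That helps when a model fails on a specific synthetic scenario and you need to regenerate it.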

However, synthetic data suffers from the “domain gap.” Simulations often feature unrealistic physics or fail to capture the messy reality of the physical world. That is why high-quality real-world robot training data is still critical. Many successful engineering teams use a hybrid approach, combining the massive scale of simulation with the ground truth of real-world captures.
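A simple way to apply such a hybrid approach at training time is to fix the share of real-world samples in every batch. The sketch below assumes two in-memory pools of samples and uses a hypothetical `real_fraction` parameter. It samples the smaller real pool with replacement so real captures keep appearing alongside a much larger synthetic pool. The right ratio is an empirical question for each task.

```python
import random
from typing import List, Sequence, TypeVar

T = TypeVar("T")


def mixed_batch(real: Sequence[T], synthetic: Sequence[T], batch_size: int,
                real_fraction: float, rng: random.Random) -> List[T]:
    """Draw one training batch containing a fixed share of real-world samples."""
    if not 0.0 <= real_fraction <= 1.0:
        raise ValueError("real_fraction must be between 0 and 1")
    n_real = round(batch_size * real_fraction)
    # Sample with replacement: the real pool is usually far smaller than the synthetic one.
    batch = rng.choices(real, k=n_real) + rng.choices(synthetic, k=batch_size - n_real)
    rng.shuffle(batch)  # avoid a fixed real-then-synthetic order within the batch
    return batch
```

For example, `mixed_batch(real, synthetic, batch_size=32, real_fraction=0.25, rng=random.Random(0))` yields 8 real and 24 synthetic samples.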

How Are Companies Solving the Data Problem?

Organizations are building robust data collection pipelines using human-in-the-loop systems. Teleoperation and imitation learning allow human operators to physically guide robots through complex tasks, recording the exact movements and sensor readings to train the model.
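In its simplest form, imitation learning from teleoperated demonstrations is behavior cloning. Each recorded (observation, operator command) pair becomes a supervised training example, and the policy is fit to reproduce the operator's commands. The sketch below shrinks this to one dimension. It assumes a hypothetical state (distance to a target) and a linear policy fit by ordinary least squares. Production systems typically fit neural networks to camera images and joint states instead.

```python
from typing import List, Tuple


def fit_linear_policy(demos: List[Tuple[float, float]]) -> Tuple[float, float]:
    """Behavior cloning in 1-D: fit action ~ w * state + b to (state, action) demos.

    Uses the closed-form ordinary least squares solution for a single feature.
    """
    n = len(demos)
    if n < 2:
        raise ValueError("need at least two demonstration steps")
    mean_s = sum(s for s, _ in demos) / n
    mean_a = sum(a for _, a in demos) / n
    var_s = sum((s - mean_s) ** 2 for s, _ in demos)
    if var_s == 0:
        raise ValueError("states must vary to fit a slope")
    cov = sum((s - mean_s) * (a - mean_a) for s, a in demos)
    w = cov / var_s
    b = mean_a - w * mean_s
    return w, b
```

Suppose the operator always commanded half the remaining distance, e.g. `[(10.0, 5.0), (4.0, 2.0), (2.0, 1.0), (0.0, 0.0)]`. The fit then recovers `w = 0.5` and `b = 0.0`.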

Crowdsourced data collection is also gaining traction for simpler interactions. Furthermore, many teams now rely on specialized data providers to source and structure high-quality multimodal robotics datasets. The aim is to give their models the precise, diverse information needed to generalize across different physical environments.

Best Practices for Building Robust Datasets

Ensure your data strategy includes multimodal coverage, capturing vision, sound, and touch simultaneously. Prioritize real-world diversity to expose the model to various lighting conditions, object placements, and edge cases.
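One way to act on the diversity advice is to audit coverage before training. The sketch below assumes each recorded episode carries condition tags such as lighting or object placement. The tag names, required values, and `min_count` threshold are all hypothetical. The audit reports which required conditions are under-represented, so you know where to collect more data.

```python
from collections import Counter
from typing import Dict, Iterable, List


def coverage_gaps(episodes: Iterable[Dict[str, str]],
                  required: Dict[str, List[str]],
                  min_count: int) -> Dict[str, List[str]]:
    """Per condition, list the required values seen in fewer than `min_count` episodes."""
    counts = {cond: Counter() for cond in required}
    for ep in episodes:
        for cond in required:
            if cond in ep:  # episodes missing a tag simply don't count toward it
                counts[cond][ep[cond]] += 1
    gaps = {}
    for cond, values in required.items():
        missing = [v for v in values if counts[cond][v] < min_count]
        if missing:
            gaps[cond] = missing
    return gaps
```

For example, three episodes tagged bright/shelf, bright/table, and dim/table, checked with `min_count=2`, report `dim` and `glare` lighting and `shelf` placement as gaps.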

Invest heavily in high-quality annotation to prevent garbage-in, garbage-out scenarios. Finally, treat dataset creation as an ongoing process. Continuous updates and strategic partnerships with specialized data providers will keep your models sharp and adaptable to new environments.
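To check annotation quality rather than assume it, a common practice is to have two annotators label the same clips and measure their agreement. Cohen's kappa, κ = (p_o - p_e) / (1 - p_e), does this while discounting the agreement expected by chance. The sketch below is a plain-Python version of that standard formula. The action labels are hypothetical, and what counts as an acceptable kappa depends on the task.

```python
from collections import Counter
from typing import Sequence


def cohens_kappa(labels_a: Sequence[str], labels_b: Sequence[str]) -> float:
    """Chance-corrected agreement between two annotators who labelled the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("need two equally long, non-empty label lists")
    n = len(labels_a)
    # p_o: fraction of items where the annotators agree.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # p_e: probability of agreeing by chance, from each annotator's label frequencies.
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    expected = sum(freq_a[k] * freq_b[k] for k in freq_a) / (n * n)
    if expected == 1.0:
        # Both annotators used one identical label everywhere; kappa is undefined,
        # so this sketch reports perfect agreement by convention.
        return 1.0
    return (observed - expected) / (1.0 - expected)
```

Take two annotators labelling four clips as grasp/grasp/lift/lift and grasp/lift/lift/lift. They agree on 75% of clips, but chance alone predicts 50%, so kappa comes out at 0.5.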

The Future of Physical Artificial Intelligence

We are witnessing the rapid convergence of artificial intelligence, advanced robotics, and scalable data infrastructure. This integration is likely to lead to general-purpose robots capable of performing multiple distinct tasks across various industries. As algorithms commoditize, data-centric AI will become the dominant paradigm. The companies that build the best data collection pipelines today are best positioned to lead the robotics industry tomorrow.

Rethinking the Path to Autonomous Systems

Algorithms are improving at breakneck speed, but data collection remains the true bottleneck. Without high-quality, diverse data, even the most advanced foundation models will fail in the physical world. Mastering embodied AI training is the key to unlocking the next generation of autonomous systems. Organizations must rethink how they collect and manage real-world robotics data if they wish to deploy functional, safe, and effective robots into our daily lives.

FAQs

What is embodied AI training?

Ans: It is the process of teaching artificial intelligence systems to perceive, interact with, and learn from the physical world using sensors and physical actuators.

Why is data so important in robotics?

Ans: Data provides the necessary foundation for how a robot understands its environment. Without diverse and accurate data, a physical agent cannot safely navigate spaces or perform physical tasks.

What are multimodal robotics datasets?

Ans: These are large collections of data that include multiple types of sensory input, such as video, audio, LiDAR, and tactile feedback, helping robots build a complete picture of their surroundings.

Why is real-world robot training data so difficult to collect?

Ans: It requires expensive hardware, significant physical time, controlled environments, and complex annotation processes that cannot be easily automated by software alone.

Can synthetic data replace real-world data?

Ans: No. While synthetic data is scalable and cost-effective, it often lacks the realistic physics and unpredictable edge cases found in the real world. A hybrid approach combining both is best.

How can companies overcome the data bottleneck?

Ans: Companies can use teleoperation and human-in-the-loop systems and partner with specialized data providers to build continuous, high-quality data pipelines.
