
Fine-Grained Data: The Key to Precision Robotics

The field of robotics has moved well past simple, repetitive automation. Modern robots are now expected to execute complex tasks that demand precision and adaptability. Whether a robotic arm is assisting in a surgical procedure, assembling miniature electronic components, or preparing a meal in a kitchen, these real-world tasks require extraordinary fine motor control.

To achieve this level of physical intelligence, developers need specialized training information. Fine-grained manipulation data acts as the missing layer that bridges the gap between clumsy mechanical movements and smooth, human-like dexterity. High-quality datasets teach robots exactly how to interact with their environment at a micro-level.

Acquiring this specific information can be highly complex. Data providers like Macgence specialize in supplying researchers and businesses with the precise robotics data needed to train the next generation of intelligent machines.

What is Fine-Grained Manipulation Data?

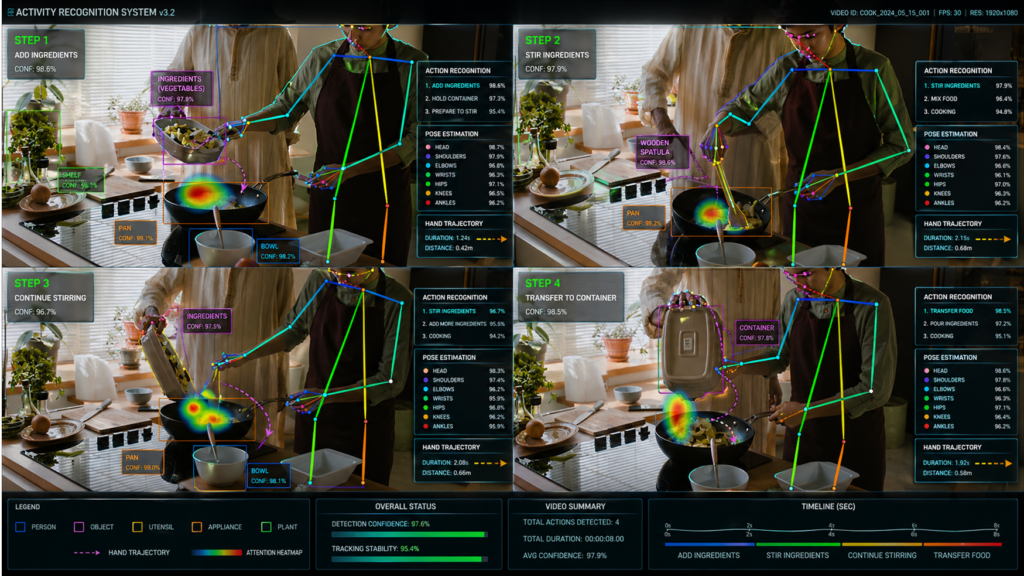

Fine-grained manipulation data consists of high-resolution, step-by-step recordings of physical interactions. It captures the minute details of how a hand or robotic gripper interacts with an object: individual finger movements, the mechanics of contact with the object, and the specific force and timing applied during a task.

Consider a common household chore like cooking a meal. Preparing food involves a complex sequence of cutting, stirring, and pouring. Capturing the exact mechanics of these tasks requires Fine-grained Cooking Manipulation Data, a specialized subset of information that records the precise physical nuances of culinary tasks.

Why Cooking Data is the Gold Standard for Manipulation Learning

Cooking serves as one of the most effective benchmarks for teaching robots manipulation skills. Preparing food is a complex, sequential, and multimodal task. It requires a machine to demonstrate precision, adaptability, and context awareness all at once.

When a robot learns to cut vegetables, it must choose the correct knife angle and apply the appropriate downward force. Pouring liquids requires precise flow control to prevent spills. Mixing ingredients demands a specific movement trajectory and rhythmic timing. Because these actions are so intricate and varied, robotics researchers frequently use cooking tasks as foundational benchmarks for training highly capable robotic systems.

The Role of Manipulation Trajectory Data in Robotics

To execute complex physical tasks, robots rely on manipulation trajectory data. This refers to the exact continuous path a robotic arm or hand takes through space over a specific period.

Key components of this data include:

- Spatial coordinates: Mapping 3D movement accurately.

- Temporal sequences: Recording the exact timeline of actions.

- Velocity and acceleration: Measuring how fast a movement occurs and how quickly it changes speed.

By analyzing manipulation trajectory data, developers can improve robotic motion planning, imitation learning, and reinforcement learning. For example, if a robotic arm needs to replicate human cooking actions, it must follow an exact trajectory to successfully move a spatula from a resting position to a frying pan without colliding with other objects.
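The velocity and acceleration components listed above are often derived from timestamped positions rather than recorded directly. The sketch below shows one way to do that with backward finite differences; the class and function names are hypothetical, and it assumes positions are sampled with strictly increasing timestamps (real pipelines usually also smooth the signal, since differencing amplifies sensor noise).

```python
from dataclasses import dataclass
from typing import List, Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class TrajectorySample:
    t: float        # timestamp in seconds
    position: Vec3  # end-effector position (x, y, z), e.g. in metres


def backward_diff(values: List[Vec3], times: List[float]) -> Tuple[List[Vec3], List[float]]:
    """Differentiate a 3D signal with backward differences.

    Returns the derivative samples and their timestamps (one fewer than the input).
    """
    derivs: List[Vec3] = []
    for i in range(1, len(values)):
        dt = times[i] - times[i - 1]
        if dt <= 0:
            raise ValueError("timestamps must be strictly increasing")
        derivs.append(tuple((values[i][k] - values[i - 1][k]) / dt for k in range(3)))
    return derivs, times[1:]


def velocity_and_acceleration(traj: List[TrajectorySample]):
    """Return ((velocity, times), (acceleration, times)) for a sampled trajectory."""
    times = [s.t for s in traj]
    positions = [s.position for s in traj]
    velocity, v_times = backward_diff(positions, times)
    acceleration, a_times = backward_diff(velocity, v_times)
    return (velocity, v_times), (acceleration, a_times)


# Usage: a spatula tip moving along x with x = t**2, sampled at 10 Hz.
traj = [TrajectorySample(t=0.1 * i, position=((0.1 * i) ** 2, 0.0, 0.05)) for i in range(4)]
(vel, _), (acc, _) = velocity_and_acceleration(traj)
print([round(v[0], 3) for v in vel])  # [0.1, 0.3, 0.5]
print([round(a[0], 3) for a in acc])  # [2.0, 2.0]
```

Each differencing step shortens the series by one sample, so the acceleration series is two samples shorter than the position series.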

Key Components of High-Quality Fine-Grained Manipulation Data

Building effective datasets requires capturing several layers of high-quality information simultaneously.

Multimodal Inputs

A robust dataset combines vision (RGB and depth cameras) with motion capture and sensor data, such as force and tactile feedback.
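One way to keep these modalities together is a per-frame record. The sketch below is a hypothetical schema (the class name `MultimodalFrame` and its field names are assumptions, not a standard format) that also reports which modalities are missing for a frame, a useful check when a camera or sensor drops out mid-recording.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class MultimodalFrame:
    """One time step of a hypothetical multimodal manipulation recording."""
    timestamp: float                      # seconds since the start of the episode
    rgb_path: Optional[str] = None        # RGB camera frame on disk
    depth_path: Optional[str] = None      # aligned depth map on disk
    hand_keypoints: List[Vec3] = field(default_factory=list)  # motion-capture joints
    wrist_force: Optional[Vec3] = None    # force/torque sensor reading (N)
    tactile: Dict[str, float] = field(default_factory=dict)   # fingertip -> pressure

    def missing_modalities(self) -> List[str]:
        """List the modalities that were not captured for this frame."""
        checks = {
            "rgb": self.rgb_path is not None,
            "depth": self.depth_path is not None,
            "mocap": len(self.hand_keypoints) > 0,
            "force": self.wrist_force is not None,
            "tactile": len(self.tactile) > 0,
        }
        return [name for name, present in checks.items() if not present]
```

For example, a frame with only an RGB image and a force reading would report `["depth", "mocap", "tactile"]` as missing.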

High Annotation Precision

Data is only useful if it is labeled correctly. High-quality datasets require frame-level labeling and meticulous tagging of object-hand interactions.
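Annotators often mark action segments (start frame, end frame, label) rather than labeling every frame by hand, and the segments are then expanded into frame-level labels. A minimal sketch, assuming hypothetical names (`Segment`, `to_frame_labels`) and half-open frame ranges, that also rejects overlapping or out-of-range segments:

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Segment:
    start_frame: int  # inclusive
    end_frame: int    # exclusive
    label: str        # e.g. "reach", "grasp_knife", "slice"


def to_frame_labels(segments: List[Segment], num_frames: int) -> List[Optional[str]]:
    """Expand segment annotations into one label per frame (None = unlabeled)."""
    labels: List[Optional[str]] = [None] * num_frames
    for seg in segments:
        if not (0 <= seg.start_frame < seg.end_frame <= num_frames):
            raise ValueError(f"segment out of range: {seg}")
        for f in range(seg.start_frame, seg.end_frame):
            if labels[f] is not None:
                raise ValueError(f"frame {f} labeled twice ({labels[f]!r}, {seg.label!r})")
            labels[f] = seg.label
    return labels
```

Frames left as `None` make annotation gaps easy to spot during quality review.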

Temporal Consistency

Each recording must preserve the correct order and timing of actions, so that consecutive frames and task steps stay coherent across every task in the dataset. The data also needs to capture real-world variability by including different environments, tools, and human demonstrators, so the robot can adapt to new situations.
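A simple automated check for temporal consistency is to scan each recording's timestamps for ordering errors and dropped frames. A sketch, assuming a nominal capture rate in Hz and a hypothetical `tolerance` parameter (the function name is also illustrative):

```python
from typing import List, Tuple


def find_timing_issues(
    timestamps: List[float], expected_hz: float, tolerance: float = 0.5
) -> List[Tuple[int, str]]:
    """Flag non-increasing timestamps and likely dropped frames.

    A gap is flagged when consecutive timestamps differ by more than
    (1 + tolerance) nominal frame periods.
    """
    period = 1.0 / expected_hz
    issues: List[Tuple[int, str]] = []
    for i in range(1, len(timestamps)):
        dt = timestamps[i] - timestamps[i - 1]
        if dt <= 0:
            issues.append((i, "non-increasing timestamp"))
        elif dt > (1.0 + tolerance) * period:
            dropped = round(dt / period) - 1  # estimated number of missing frames
            issues.append((i, f"gap of {dt:.3f}s, about {dropped} frame(s) dropped"))
    return issues
```

Each issue is reported with the index of the frame where it was detected, so a reviewer can jump straight to that point in the recording.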

Challenges in Collecting Fine-Grained Cooking Manipulation Data

Gathering this level of detailed information presents several distinct challenges. Recording micro-movements is difficult in itself, because hands frequently occlude the objects they manipulate and block the camera's view.
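One common mitigation is to flag frames where the hand is too occluded to trust, so they can be re-annotated, checked against another camera view, or excluded. A minimal sketch, assuming a hand-pose detector that outputs a per-keypoint confidence score between 0 and 1 (for example, for a 21-keypoint hand model); the function name and both thresholds are hypothetical:

```python
from typing import List


def occluded_frames(
    keypoint_conf: List[List[float]], min_conf: float = 0.5, max_hidden: float = 0.3
) -> List[int]:
    """Return indices of frames where too many hand keypoints look occluded.

    keypoint_conf[i][k] is the detector's confidence for keypoint k in frame i.
    A keypoint counts as hidden when its confidence is below min_conf.
    """
    flagged: List[int] = []
    for i, confs in enumerate(keypoint_conf):
        if not confs:
            flagged.append(i)  # no hand detected at all in this frame
            continue
        hidden_fraction = sum(1 for c in confs if c < min_conf) / len(confs)
        if hidden_fraction > max_hidden:
            flagged.append(i)
    return flagged
```

The thresholds would need tuning per detector and camera setup; the values above are placeholders.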

Annotation is another major hurdle. Frame-by-frame labeling of subtle finger movements is incredibly labor-intensive. Additionally, synchronizing various sensors—like matching video feeds with tactile sensor readouts—requires advanced technical infrastructure. These factors contribute to the high cost of data collection and a general lack of standardized datasets in the industry.
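A minimal sketch of one software-side synchronization step: matching each video frame to the nearest tactile sample by timestamp. It assumes both streams already share a common clock (hardware triggering or clock-offset estimation is a separate problem) and that sensor timestamps are sorted; `align_nearest` and `max_offset` are hypothetical names.

```python
import bisect
from typing import List, Optional, Sequence


def align_nearest(
    frame_times: Sequence[float], sensor_times: Sequence[float], max_offset: float
) -> List[Optional[int]]:
    """For each video frame, return the index of the nearest sensor sample.

    Returns None for a frame when no sensor sample lies within max_offset seconds.
    sensor_times must be sorted in ascending order.
    """
    matches: List[Optional[int]] = []
    for t in frame_times:
        i = bisect.bisect_left(sensor_times, t)
        # The nearest sample is either just before or just after t.
        candidates = [j for j in (i - 1, i) if 0 <= j < len(sensor_times)]
        best = min(candidates, key=lambda j: abs(sensor_times[j] - t), default=None)
        if best is not None and abs(sensor_times[best] - t) <= max_offset:
            matches.append(best)
        else:
            matches.append(None)
    return matches
```

For instance, 30 Hz video frames can be paired with 100 Hz tactile readings this way; frames with no reading close enough come back as `None` rather than being silently matched to a stale sample.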

Applications of Fine-Grained Manipulation Data in Robotics

Detailed manipulation datasets are driving advancements across multiple industries:

- Household robots: Powering robotic cooking assistants and cleaning devices.

- Industrial automation: Enabling precision assembly for delicate electronics and machinery.

- Healthcare robotics: Providing the foundation for surgical assistance systems that require extremely high precision.

- Service robots: Assisting with food preparation and hospitality services.

- Humanoid robots: Helping bipedal robots learn human-like dexterity for general-purpose tasks.

How Fine-Grained Data Improves Robot Learning

Feeding high-quality data into AI models yields significant improvements in robotic performance. It leads to better outcomes in imitation learning, allowing machines to mimic human actions more accurately.

This data can also help narrow the sim-to-real gap, making it more likely that a robot trained in a virtual simulation performs reliably in the physical world. It can further improve a robot's ability to generalize across tasks, strengthen grasping and object handling, and speed up training for embodied AI systems.
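Imitation learning in its simplest form, behavior cloning, treats demonstrations as supervised (state, action) pairs and fits a policy that maps one to the other. The toy sketch below fits a one-dimensional linear policy by least squares; the state (spatula distance to the pan) and action (commanded speed) are illustrative assumptions, and practical systems use high-dimensional observations and neural-network policies instead.

```python
from typing import List, Tuple


def fit_linear_policy(demos: List[Tuple[float, float]]) -> Tuple[float, float]:
    """Behavior cloning with a 1-D linear policy a = w * s + b, fitted by least squares.

    demos holds (state, action) pairs taken from human demonstrations.
    """
    n = len(demos)
    if n < 2:
        raise ValueError("need at least two (state, action) pairs")
    mean_s = sum(s for s, _ in demos) / n
    mean_a = sum(a for _, a in demos) / n
    var_s = sum((s - mean_s) ** 2 for s, _ in demos)
    if var_s == 0:
        raise ValueError("states must vary to fit a slope")
    cov = sum((s - mean_s) * (a - mean_a) for s, a in demos)
    w = cov / var_s
    b = mean_a - w * mean_s
    return w, b


# Hypothetical demos: distance to pan (m) -> commanded speed (m/s).
demos = [(0.0, 0.1), (0.1, -0.1), (0.2, -0.3), (0.3, -0.5)]
w, b = fit_linear_policy(demos)
```

The fitted policy can then be queried for unseen states, e.g. `w * 0.15 + b`; the quality of that prediction depends directly on how precise and consistent the demonstrations are.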

Best Practices for Building Manipulation Datasets

To create effective datasets, developers must follow rigorous collection standards. Using both egocentric (first-person) and third-person camera views provides a comprehensive visual understanding of a task. Combining visual information with manipulation trajectory data and sensor readouts creates a well-rounded dataset.

It is also crucial to ensure the dataset includes diverse scenarios and edge cases so the robot knows how to react when things go wrong. Maintaining strict annotation quality standards and continuously validating and iterating on the dataset ensures long-term reliability.
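Checking scenario diversity can be partly automated by counting episodes per combination of conditions and flagging under-represented ones. A minimal sketch, assuming each episode is tagged with hypothetical `environment` and `tool` metadata keys and that a fixed minimum episode count per combination is the target:

```python
from collections import Counter
from itertools import product
from typing import Dict, Iterable, List, Sequence, Tuple


def coverage_gaps(
    episodes: Iterable[Dict[str, str]],
    environments: Sequence[str],
    tools: Sequence[str],
    min_episodes: int,
) -> List[Tuple[str, str, int]]:
    """Return every (environment, tool) pair recorded fewer than min_episodes times."""
    counts = Counter((ep["environment"], ep["tool"]) for ep in episodes)
    return [
        (env, tool, counts[(env, tool)])
        for env, tool in product(environments, tools)
        if counts[(env, tool)] < min_episodes
    ]
```

The same pattern extends to other axes such as demonstrator, lighting, or whether an episode contains a failure or recovery (edge case).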

Why Businesses are Outsourcing Robotics Data Collection

Many robotics companies are choosing to outsource their data collection processes. Managing large-scale data gathering creates massive scalability challenges for internal teams. Specialized data collection also requires distinct domain expertise that many software developers lack.

Outsourcing offers a faster turnaround time and greater cost efficiency. Macgence stands out as a leading provider of custom Fine-grained Cooking Manipulation Data. By delivering high-quality manipulation trajectory data and end-to-end robotics dataset solutions, Macgence allows robotics companies to focus on building models rather than gathering raw information.

Future Trends in Manipulation Data for Robotics

The demand for complex robotics data will continue to evolve. We are seeing a steady rise in multimodal robotics datasets that combine vision, language, and action. Integration with Vision-Language-Action (VLA) models is allowing robots to understand verbal commands and translate them into precise physical movements.
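Some VLA models represent continuous robot actions as discrete tokens so that a language-model backbone can output them; RT-2, for example, discretizes each action dimension into 256 bins. A minimal sketch of that idea, assuming uniform bins over a known action range and hypothetical function and class names; real models differ in action ranges, binning schemes, and tokenizers:

```python
from dataclasses import dataclass
from typing import List, Sequence


def tokenize_action(
    action: Sequence[float], low: Sequence[float], high: Sequence[float], num_bins: int = 256
) -> List[int]:
    """Map each continuous action dimension to one of num_bins uniform bins."""
    tokens: List[int] = []
    for a, lo, hi in zip(action, low, high):
        clipped = min(max(a, lo), hi)
        idx = int((clipped - lo) / (hi - lo) * num_bins)
        tokens.append(min(idx, num_bins - 1))  # the upper bound falls into the last bin
    return tokens


def detokenize_action(
    tokens: Sequence[int], low: Sequence[float], high: Sequence[float], num_bins: int = 256
) -> List[float]:
    """Reconstruct each dimension as the centre of its bin."""
    return [lo + (t + 0.5) * (hi - lo) / num_bins for t, lo, hi in zip(tokens, low, high)]


@dataclass
class VLASample:
    instruction: str          # natural-language command, e.g. "stir the soup"
    image_path: str           # camera observation for this step
    action_tokens: List[int]  # discretized action the model should predict
```

Binning trades a small, bounded reconstruction error (at most half a bin width per dimension) for an action space that fits the model's token vocabulary.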

The industry is also increasing its use of egocentric data pipelines and exploring synthetic and real-world hybrid datasets to speed up training. Ultimately, this data-driven approach will pave the way for highly capable, general-purpose humanoid robots.

Empowering the Next Generation of Precision Machines

Precision robotics depends heavily on the availability of fine-grained data. Complex tasks require machines to understand the subtle nuances of force, trajectory, and timing. Specialized datasets, particularly cooking datasets, provide a strong training foundation that can translate into better real-world performance. High-quality manipulation trajectory data helps robots move safely and efficiently through human environments. Partner with Macgence to secure the advanced robotics datasets your organization needs to build the intelligent machines of tomorrow.

FAQs

Q: What is Fine-grained Cooking Manipulation Data?

Ans: It is a highly detailed dataset that records the precise physical movements, forces, and interactions involved in preparing food. It is used to teach robots complex, sequential tasks.

Q: Why is manipulation trajectory data important in robotics?

Ans: It provides the exact spatial and temporal path a robotic arm must take to complete a task, enabling smooth motion planning and accurate imitation learning.

Q: How is fine-grained manipulation data collected?

Ans: It is gathered using a combination of high-resolution cameras, motion capture technology, and tactile sensors to record every aspect of a physical interaction.

Q: What are the main challenges in collecting this data?

Ans: Key challenges include the high cost of collection, the difficulty of frame-by-frame annotation, handling visual occlusions, and synchronizing multiple sensor feeds.

Q: How does fine-grained data improve robot learning?

Ans: It helps narrow the sim-to-real gap, improves grasping capabilities, and allows robots to generalize their learned skills to new, unseen tasks.

Q: Which industries benefit from fine-grained manipulation data?

Ans: Major beneficiaries include healthcare (surgical robots), manufacturing (precision assembly), household consumer goods (cleaning and cooking robots), and hospitality.

Q: Can businesses outsource robotics data collection?

Ans: Yes. Companies often partner with specialized data providers like Macgence to quickly and cost-effectively obtain high-quality, fully annotated robotics datasets.
