- What Are Multimodal Robotics Datasets?

- Why Single-Modal Data Falls Short

- The Role of Multimodal AI Data Enrichment

- Key Components of High-Quality Multimodal Robotics Datasets

- Use Cases Driving Adoption

- Challenges in Building Multimodal Robotics Datasets

- Best Practices for Creating Multimodal Robotics Datasets

- The Future of Robot Perception

- Securing a Competitive Advantage in Robotics

- FAQs

Multimodal Robotics Datasets: The Future of Perception

Robots are moving beyond single-sensor intelligence toward richer, more human-like perception. For years, traditional robotic systems relied heavily on vision-only data. Cameras are useful, but they capture only a fraction of the physical environment. This limitation often prevents robots from fully understanding and interacting with complex surroundings.

Multimodal robotics datasets represent the next major evolution in AI. By combining different types of sensory inputs, these datasets give robots a more complete picture of their environment. This comprehensive understanding is essential for real-world deployment, where machines must exhibit robustness and adaptability to function safely alongside humans.

This leap in perception technology matters across numerous sectors. Manufacturing plants, healthcare facilities, logistics centers, and autonomous vehicle companies are already testing and deploying these advanced systems. By training on richer data, robots can perform intricate tasks with greater precision and reliability.

What Are Multimodal Robotics Datasets?

At their core, multimodal robotics datasets are collections of training data that combine multiple sensor modalities into a single, cohesive package. Instead of relying solely on one type of input, these datasets fuse several data streams to give AI models a holistic view of an action or environment.

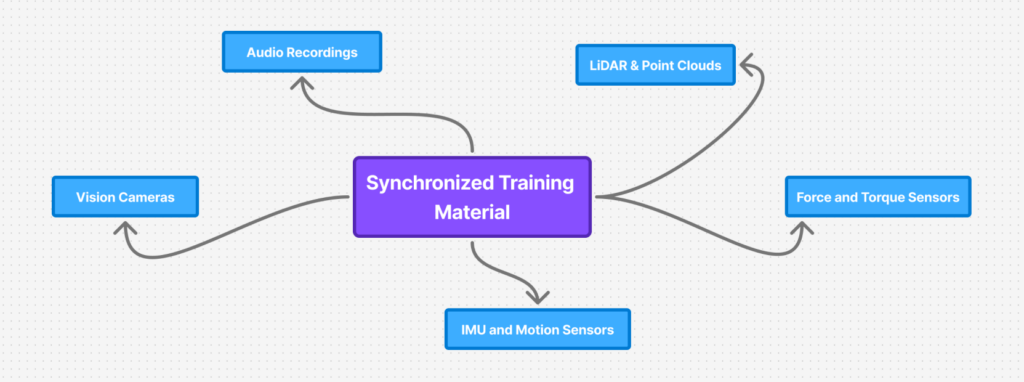

Common modalities found in these datasets include:

- Vision (RGB cameras and depth sensors)

- LiDAR and point clouds for 3D spatial mapping

- Audio recordings

- IMU (Inertial Measurement Units) and motion sensors

- Force and torque sensors for tactile feedback

A key feature of these datasets is the inclusion of multi-sensor robot trajectories. These trajectories consist of synchronized streams of perception and action data over time. For example, when a robot grasps an object, the dataset captures the visual data of the object, the tactile force applied by the robotic gripper, and the motion trajectory data of the arm moving through space. This synchronized information helps the AI understand exactly how an action correlates with sensory feedback.
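As a rough illustration of the grasping example above, a trajectory can be thought of as an ordered list of time-stamped steps, each holding perception and action data together. The sketch below is a minimal, hypothetical schema: the class names `TrajectoryStep` and `Trajectory` and every field name are assumptions made for illustration, not a standard format.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class TrajectoryStep:
    """One synchronized sample of perception and action (hypothetical schema)."""
    timestamp_s: float                      # time since episode start, in seconds
    rgb_frame_id: int                       # index of the camera frame for this step
    gripper_force_n: float                  # force measured at the gripper, in newtons
    joint_positions_rad: Tuple[float, ...]  # arm joint angles, in radians
    action: Tuple[float, ...]               # command sent to the robot at this step


@dataclass
class Trajectory:
    """An ordered sequence of steps for one episode, such as a single grasp."""
    task: str
    steps: List[TrajectoryStep] = field(default_factory=list)

    def append(self, step: TrajectoryStep) -> None:
        # Enforce strictly increasing time so the episode can be replayed in order.
        if self.steps and step.timestamp_s <= self.steps[-1].timestamp_s:
            raise ValueError("timestamps must be strictly increasing")
        self.steps.append(step)

    def duration_s(self) -> float:
        # An episode with fewer than two steps has no measurable duration.
        if len(self.steps) < 2:
            return 0.0
        return self.steps[-1].timestamp_s - self.steps[0].timestamp_s


# Illustrative values only, not recorded data.
grasp = Trajectory(task="grasp_cup")
grasp.append(TrajectoryStep(0.0, 0, 0.0, (0.0, 0.5), (0.0, 0.0)))
grasp.append(TrajectoryStep(0.1, 3, 2.5, (0.1, 0.6), (0.1, 0.1)))
```

Keeping perception and action in the same step is what lets a model learn how the two relate; a real pipeline would likely store references to large arrays (images, point clouds) rather than inline values.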

Why Single-Modal Data Falls Short

Vision-only datasets have driven significant progress in computer vision, but they frequently fail when applied to complex physical tasks. A camera cannot tell a robot how heavy an object is or how much grip force is required to hold it without crushing it.

Furthermore, single-modal systems struggle in unpredictable real-world environments. Poor performance in low-light conditions, heavy shadows, or visual occlusions can render a vision-only robot entirely blind. Single-sensor data also suffers from sparsity and ambiguity problems, leaving AI models guessing when they encounter unfamiliar situations. To overcome these limitations, developers are turning to multimodal AI data enrichment.

The Role of Multimodal AI Data Enrichment

Multimodal AI data enrichment is the process of enhancing and annotating data from various sensors to create high-quality, synchronized training material. This process transforms raw data streams into usable knowledge for machine learning models.

Enrichment improves dataset quality through accurate sensor fusion alignment and strict temporal synchronization. Because sensors sample at different rates, each video frame is matched to the force sensor reading closest to it in time, ideally within a few milliseconds. Contextual labeling across modalities then lets annotators mark an event once and have it apply to every stream. For example, a “pick action” is labeled simultaneously across the video feed, the physical trajectory, and the force data.
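One simple way to picture temporal synchronization is nearest-timestamp matching: for each video frame, find the force reading closest in time and reject the match if the gap is too large. The sketch below assumes both streams carry sorted timestamps in seconds on a shared clock; the function names and the 5 ms tolerance are illustrative assumptions, not a prescribed method.

```python
from bisect import bisect_left
from typing import List, Optional


def nearest_index(timestamps: List[float], t: float) -> int:
    """Return the index of the value in sorted `timestamps` closest to `t`."""
    if not timestamps:
        raise ValueError("timestamps must not be empty")
    i = bisect_left(timestamps, t)
    if i == 0:
        return 0
    if i == len(timestamps):
        return len(timestamps) - 1
    # Pick whichever neighbor is closer; ties go to the earlier sample.
    return i if timestamps[i] - t < t - timestamps[i - 1] else i - 1


def match_frames_to_force(
    frame_ts: List[float], force_ts: List[float], tolerance_s: float = 0.005
) -> List[Optional[int]]:
    """For each frame time, return the matching force index, or None if too far."""
    matches: List[Optional[int]] = []
    for t in frame_ts:
        j = nearest_index(force_ts, t)
        matches.append(j if abs(force_ts[j] - t) <= tolerance_s else None)
    return matches


# Illustrative timestamps: a ~30 fps camera and a 100 Hz force sensor.
frame_ts = [0.000, 0.033, 0.067, 0.200]
force_ts = [0.000, 0.010, 0.020, 0.030, 0.040, 0.050, 0.060, 0.070]
matches = match_frames_to_force(frame_ts, force_ts)
print(matches)  # [0, 3, 7, None]
```

The last frame gets `None` because the force stream ended well before it, which is the kind of mismatch an enrichment pipeline would want to flag rather than silently pair.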

The benefits of this enriched data are substantial. Models trained on enriched datasets show better generalization to new tasks, reduced model bias, and significantly improved robustness when deployed in the real world.

Key Components of High-Quality Multimodal Robotics Datasets

Building an effective dataset requires careful attention to detail across several critical components.

Sensor Fusion & Calibration

Accurate alignment between modalities is non-negotiable. Without precise spatial and temporal consistency, the AI model receives conflicting information. Proper calibration ensures that each depth measurement from a LiDAR scan maps to the correct pixel in the RGB camera image.
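To make the LiDAR-to-camera alignment concrete, here is a minimal sketch of the usual two-step idea: move a 3D point from the LiDAR frame into the camera frame with a rigid transform (the extrinsic calibration), then project it onto the image with a pinhole model (the intrinsic calibration). All calibration numbers below are placeholders, the function names are hypothetical, and lens distortion is ignored.

```python
from typing import Optional, Tuple

Point3 = Tuple[float, float, float]


def transform_point(rotation, translation: Point3, point: Point3) -> Point3:
    """Apply a rigid transform: 3x3 rotation (row-major) followed by translation."""
    return tuple(
        sum(rotation[r][c] * point[c] for c in range(3)) + translation[r]
        for r in range(3)
    )


def project_to_pixel(
    point_cam: Point3, fx: float, fy: float, cx: float, cy: float
) -> Optional[Tuple[float, float]]:
    """Pinhole projection of a camera-frame point; None if it is behind the camera."""
    x, y, z = point_cam
    if z <= 0:
        return None
    return (fx * x / z + cx, fy * y / z + cy)


# Placeholder calibration values, not taken from any real sensor rig.
R_LIDAR_TO_CAM = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
T_LIDAR_TO_CAM = (0.0, -0.1, 0.0)  # assumed 0.1 m offset along the camera's y axis
FX, FY, CX, CY = 500.0, 500.0, 320.0, 240.0  # assumed focal lengths and image center

lidar_point = (1.0, 0.1, 5.0)  # meters, assumed to use z-forward axes like the camera
point_cam = transform_point(R_LIDAR_TO_CAM, T_LIDAR_TO_CAM, lidar_point)
pixel = project_to_pixel(point_cam, FX, FY, CX, CY)
print(pixel)  # (420.0, 240.0)
```

If the rotation or translation is slightly off, every projected point lands on the wrong pixel, which is why calibration errors show up as conflicting information during training.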

Multi-Sensor Robot Trajectories

Capturing sequential decision-making is vital for teaching robots how to move and react. Multi-sensor robot trajectories are essential for imitation learning and reinforcement learning, as they show the AI exactly how a successful sequence of actions unfolds over time.

Annotation Across Modalities

Labeling data across multiple sensors presents unique cross-modal challenges. A label applied to a 2D image must also make sense within a 3D point cloud or an audio waveform. This requires specialized annotation workflows and highly trained data labeling teams.
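One way to keep a label meaningful across sensors is to define it in time rather than in any one sensor's sample indices, then map that interval onto each stream. The sketch below assumes every stream has sorted timestamps on a shared clock; `EventLabel`, `apply_label`, and the stream names are hypothetical and chosen only for illustration.

```python
from bisect import bisect_left, bisect_right
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class EventLabel:
    """A label defined by a time interval, independent of any single sensor."""
    name: str
    start_s: float
    end_s: float


def indices_in_interval(
    timestamps: List[float], start_s: float, end_s: float
) -> Tuple[int, int]:
    """Return the half-open index range [lo, hi) of samples with start_s <= t <= end_s."""
    lo = bisect_left(timestamps, start_s)
    hi = bisect_right(timestamps, end_s)
    return lo, hi


def apply_label(
    label: EventLabel, streams: Dict[str, List[float]]
) -> Dict[str, Tuple[int, int]]:
    """Map one time-based label onto each stream's own sample indices."""
    return {
        name: indices_in_interval(ts, label.start_s, label.end_s)
        for name, ts in streams.items()
    }


# Illustrative timestamps: a 2 Hz video stream and a 4 Hz force stream.
streams = {
    "video": [0.0, 0.5, 1.0, 1.5, 2.0],
    "force": [0.0, 0.25, 0.5, 0.75, 1.0, 1.25, 1.5, 1.75, 2.0],
}
pick = EventLabel(name="pick", start_s=0.5, end_s=1.5)
spans = apply_label(pick, streams)
print(spans)  # {'video': (1, 4), 'force': (2, 7)}
```

The same "pick" event covers three video frames and five force samples here. Spatial labels, such as a 2D box that must correspond to a 3D region, need additional geometry that this sketch does not attempt.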

Data Diversity & Edge Cases

Real-world variability is a major hurdle for robotics. High-quality datasets must include diverse lighting conditions, varying terrain, and multiple object types. Importantly, they must also include failure cases, teaching the AI what not to do when things go wrong.
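A lightweight way to check diversity is to count how many episodes fall into each combination of conditions and list the combinations that are missing. The sketch below assumes each episode carries simple metadata tags; the tag names (`lighting`, `outcome`) and their values are made up for illustration.

```python
from collections import Counter
from itertools import product
from typing import Dict, List, Tuple

# Illustrative episode metadata, not real dataset statistics.
episodes: List[Dict[str, str]] = [
    {"lighting": "bright", "outcome": "success"},
    {"lighting": "bright", "outcome": "failure"},
    {"lighting": "dim", "outcome": "success"},
    {"lighting": "dim", "outcome": "success"},
]


def coverage_gaps(
    episodes: List[Dict[str, str]],
    lighting_values: List[str],
    outcome_values: List[str],
    min_count: int = 1,
) -> List[Tuple[str, str]]:
    """Return (lighting, outcome) combinations with fewer than min_count episodes."""
    counts = Counter((e["lighting"], e["outcome"]) for e in episodes)
    return [
        combo
        for combo in product(lighting_values, outcome_values)
        if counts[combo] < min_count
    ]


gaps = coverage_gaps(episodes, ["bright", "dim"], ["success", "failure"])
print(gaps)  # [('dim', 'failure')]
```

In this toy example the dataset has no failure cases recorded in dim lighting, which is exactly the kind of gap worth filling before training.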

Use Cases Driving Adoption

Several industries are actively driving the demand for complex robotics datasets.

Autonomous Robots

Self-driving vehicles and autonomous drones rely heavily on navigation systems powered by a combination of LiDAR, vision, and IMU data. This fusion allows them to navigate safely through dynamic environments.

Industrial Robotics

Modern manufacturing requires precision. Robotic arms use vision alongside force sensors to handle delicate components, assemble small parts, and perform quality control inspections with high accuracy.

Humanoid Robots

Companies developing humanoid robots rely on multi-sensor robot trajectories to teach machines how to walk, balance, and interact with human tools safely.

Healthcare Robotics

Surgical robots combine high-definition imaging with tactile feedback, helping surgeons perform minimally invasive procedures while retaining some of the physical sensation of traditional surgery.

Delivery & Service Robots

Robots delivering packages or cleaning hospital floors must navigate busy, dynamic environments. Multimodal perception allows them to detect obstacles, hear approaching hazards, and map optimal routes.

Challenges in Building Multimodal Robotics Datasets

Despite the clear advantages, creating these datasets is difficult. Data collection is complex because it requires managing multiple hardware devices simultaneously. Hardware synchronization issues arise frequently because different sensors capture data at different sampling rates.
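When sensors run at different rates, one common workaround is to resample the faster stream at the slower stream's timestamps. The sketch below uses plain linear interpolation on a one-dimensional signal and clamps at the ends; it assumes sorted timestamps on a shared clock, and the function name and sample values are illustrative.

```python
from bisect import bisect_right
from typing import List


def interpolate(sample_ts: List[float], sample_values: List[float], query_t: float) -> float:
    """Linearly interpolate a sorted 1-D signal at query_t, clamping at both ends."""
    if not sample_ts or len(sample_ts) != len(sample_values):
        raise ValueError("need equal-length, non-empty timestamps and values")
    if query_t <= sample_ts[0]:
        return sample_values[0]
    if query_t >= sample_ts[-1]:
        return sample_values[-1]
    i = bisect_right(sample_ts, query_t)  # first index whose timestamp is > query_t
    t0, t1 = sample_ts[i - 1], sample_ts[i]
    v0, v1 = sample_values[i - 1], sample_values[i]
    weight = (query_t - t0) / (t1 - t0)
    return v0 + weight * (v1 - v0)


# Illustrative data: an IMU channel sampled every 0.25 s, resampled at camera times.
imu_ts = [0.0, 0.25, 0.5, 0.75, 1.0]
imu_accel = [0.0, 1.0, 2.0, 3.0, 4.0]
camera_ts = [0.0, 0.375, 0.75, 1.2]
resampled = [interpolate(imu_ts, imu_accel, t) for t in camera_ts]
print(resampled)  # [0.0, 1.5, 3.0, 4.0]
```

Linear interpolation suits smooth signals such as acceleration; it is not appropriate for images or for quantities like rotations, which need their own interpolation schemes.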

Annotation costs are also significantly higher than for traditional datasets because of the specialized skills required. Additionally, the sheer volume of data generated by multiple sensors creates massive storage and processing requirements. There are also notable standardization gaps in the industry, making it hard to share data between different platforms. Finally, privacy and security concerns remain a hurdle, especially when recording video and audio in public spaces.
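To get a feel for the storage problem, a back-of-the-envelope estimate multiplies each sensor's sample size by its rate. The figures below are assumptions picked for illustration (one uncompressed 640x480 RGB camera, a 200 Hz IMU, a 1 kHz force-torque sensor), not measurements from any particular robot.

```python
def bytes_per_second(sample_bytes: int, rate_hz: int) -> int:
    """Raw data rate of one stream: bytes per sample times samples per second."""
    return sample_bytes * rate_hz


# Assumed, uncompressed stream parameters for illustration only.
stream_rates = {
    "rgb_camera": bytes_per_second(640 * 480 * 3, 30),  # 8-bit RGB at 30 fps
    "imu": bytes_per_second(6 * 4, 200),                # 6 float32 channels at 200 Hz
    "force_torque": bytes_per_second(6 * 4, 1000),      # 6 float32 channels at 1 kHz
}

total_bytes_per_second = sum(stream_rates.values())
gigabytes_per_hour = total_bytes_per_second * 3600 / 1e9

print(total_bytes_per_second)        # 27676800
print(round(gigabytes_per_hour, 1))  # 99.6
```

Under these assumptions a single camera dominates the total at roughly 100 GB per hour before compression, and adding LiDAR or more cameras pushes the figure higher.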

Best Practices for Creating Multimodal Robotics Datasets

To overcome these challenges, organizations should adhere to proven best practices. Teams must use standardized data formats to ensure compatibility across different machine learning frameworks. Precise sensor calibration should be completed before every data collection session.

Companies should invest in scalable annotation pipelines that can handle complex, cross-modal labeling tasks. Prioritizing real-world data collection over purely simulated data tends to yield better results during physical deployment. It is also important to incorporate continuous data validation to catch errors early. Partnering with specialized data providers can help streamline this undertaking and maintain output quality.
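Continuous data validation can start with a few cheap checks run on every recorded stream. The sketch below looks for three common problems: mismatched lengths, timestamps that do not increase or have large gaps, and non-finite values. The function name, the message wording, and the `max_gap_s` threshold are illustrative assumptions.

```python
import math
from typing import List


def validate_stream(
    name: str, timestamps: List[float], values: List[float], max_gap_s: float
) -> List[str]:
    """Return a list of human-readable problems found in one sensor stream."""
    problems: List[str] = []
    if len(timestamps) != len(values):
        problems.append(f"{name}: {len(timestamps)} timestamps but {len(values)} values")
    for i in range(1, len(timestamps)):
        dt = timestamps[i] - timestamps[i - 1]
        if dt <= 0:
            problems.append(f"{name}: non-increasing timestamp at index {i}")
        elif dt > max_gap_s:
            problems.append(f"{name}: gap of {dt:.3f}s before index {i}")
    for i, value in enumerate(values):
        if not math.isfinite(value):
            problems.append(f"{name}: non-finite value at index {i}")
    return problems


# Illustrative streams: one clean, one with a repeated timestamp, a gap, and a NaN.
clean = validate_stream("force", [0.0, 0.01, 0.02], [1.0, 1.1, 1.2], max_gap_s=0.05)
broken = validate_stream(
    "force", [0.0, 0.01, 0.01, 0.2], [1.0, float("nan"), 1.2, 1.3], max_gap_s=0.05
)
print(clean)        # []
print(len(broken))  # 3
```

Running checks like these at collection time, rather than after annotation, keeps bad recordings from consuming labeling budget.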

The Future of Robot Perception

The robotics industry is shifting toward foundation models. These large-scale AI models thrive on vast amounts of diverse data. Cross-embodiment learning, enabled by multimodal data, aims to let an AI trained on a robotic arm transfer its knowledge to a completely different humanoid robot.

We will also see a rise in hybrid datasets that seamlessly blend simulated environments with real-world data collection. The role of multimodal robotics datasets will only become more critical as developers push toward AGI-level robotics. Consequently, the demand for high-quality, enriched datasets will continue to surge.

Securing a Competitive Advantage in Robotics

The transition from single-sensor systems to multimodal perception is well underway. Multimodal robotics datasets provide the essential foundation for building intelligent, adaptable, and robust machines. Companies that invest early in high-quality data collection and multimodal AI data enrichment will position themselves at the forefront of the robotics revolution.

The future of robotics isn’t just smarter models. The future is richer, more connected data.

FAQs

Multimodal robotics datasets are training datasets that combine data from multiple sensor types, such as cameras, LiDAR, audio, and force sensors, to give AI models a complete understanding of an environment.

They matter because they allow robots to understand their physical surroundings accurately. This makes robots safer, more adaptable, and capable of operating in unpredictable real-world environments.

Multi-sensor robot trajectories are synchronized recordings of a robot’s perception and action data over a specific period. They help teach AI models how sensory inputs relate to physical movements.

Multimodal AI data enrichment is the process of aligning, synchronizing, and labeling data across multiple sensor streams to create high-quality training material for machine learning models.

Key industries using these datasets include manufacturing, autonomous vehicles, healthcare, logistics, and service robotics.

The main challenges in building them include difficult hardware synchronization, high annotation costs, massive storage requirements, and complex data collection processes.

By providing overlapping sensory information, multimodal datasets reduce the blind spots and ambiguities associated with relying on a single sensor, like a camera.

Multimodal data will remain central to robotics. As the industry moves toward advanced foundation models and generalized robotics, rich, multi-sensor data is required to train capable systems.
