- What is Egocentric Data Annotation in AI?

- Why Egocentric Data is Critical for AI

- Key Use Cases of Egocentric Data Annotation

- Types of Egocentric Data Annotation

- Challenges in Egocentric Data Annotation

- Best Practices for Egocentric Data Annotation

- Tools & Technologies Used

- How Macgence Helps with Egocentric Data Annotation

- Future of Egocentric AI & Annotation

- Preparing for Next-Generation AI Models

- FAQs

What is Egocentric Data Annotation? Use Cases, Challenges & Best Practices

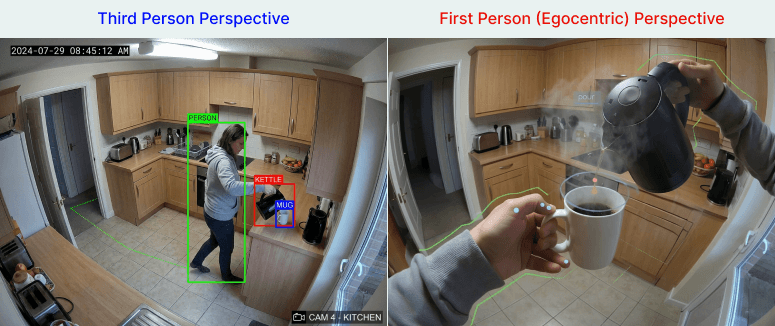

The rapid rise of augmented reality, virtual reality, and wearable artificial intelligence has fundamentally changed how machines observe the world. Historically, machine learning models relied on cameras mounted on walls or static tripods. These provided a distant, third-person view of human activity. As human-centric machine learning advances, developers recognize that this traditional viewpoint is no longer sufficient. Machines need to see exactly what we see.

This shift in perspective brings us to the concept of the “egocentric” view. An egocentric perspective captures data from the first-person point of view, mirroring what the wearer sees and hears. It requires specialized first-person data annotation to help machines understand context, human intent, and complex physical interactions.

Traditional data annotation struggles to capture the nuances of human gaze, sudden head movements, or hands manipulating objects up close. Egocentric data annotation has emerged as a specialized requirement to solve these exact problems. By labeling data from the user’s immediate viewpoint, developers can train more sophisticated algorithms.

Industries across the spectrum are already taking notice. Healthcare professionals use it for surgical training, robotics engineers use it to teach machines complex tasks, and retail giants use it to map consumer behavior. By mastering egocentric vision datasets, organizations can build the next generation of context-aware artificial intelligence.

What is Egocentric Data Annotation in AI?

Egocentric data annotation is the process of labeling and categorizing data captured from a first-person perspective. Instead of watching a scene unfold from a distance, the camera or sensor is mounted on the user, typically via smart glasses, body cameras, or virtual reality headsets.

The types of data collected in an egocentric setup go far beyond standard video frames. They include high-definition video feeds capturing the immediate field of view. They also involve complex audio recordings that blend ambient background noise with conversational speech. Furthermore, this data often incorporates sensor inputs like motion tracking, accelerometers, and advanced gaze tracking to pinpoint exactly where a user is looking.

To understand the difference between egocentric and third-person datasets, consider the simple act of making a cup of coffee. A CCTV camera in the corner of a kitchen records a person walking around, picking up a mug, and pouring liquid. The machine learning model sees a full-body shape interacting with distant objects. An egocentric camera, worn by the person making the coffee, records the exact angle of the hands gripping the mug, the precise moment the eyes check the coffee level, and the auditory cue of the liquid hitting the ceramic. Labeling this highly specific, immersive data is the core function of egocentric data annotation.

Why Egocentric Data is Critical for AI

The push for better wearable AI datasets is driven by the explosive growth of consumer and enterprise hardware. Devices like Meta smart glasses and the Apple Vision Pro require highly accurate algorithms to function effectively. These devices do not observe the user from afar; they share the user’s exact perspective.

Context-aware AI systems depend heavily on this first-person viewpoint. When a machine understands what a person is looking at and touching, it can anticipate needs and offer relevant digital overlays. This capability is essential for real-time human interaction modeling. If an AI assistant cannot accurately interpret the user’s immediate physical environment, it cannot provide useful, timely assistance.

The benefits of using egocentric data are substantial. First, it offers significantly better contextual understanding. The AI learns to associate specific hand movements with specific objects and outcomes. Second, it improves human-AI interaction. Wearable devices can respond to subtle cues, such as a shift in gaze or a brief hesitation. Finally, it improves decision-making accuracy in real-world settings. Autonomous systems and robots trained on first-person data are better prepared to operate safely and efficiently in unpredictable human environments.

Key Use Cases of Egocentric Data Annotation

AR/VR & Mixed Reality

Augmented and virtual reality rely heavily on understanding the user’s immediate environment. AR/VR data labeling allows these systems to perform accurate object recognition from the user’s point of view. It also enables precise gesture tracking, allowing users to interact with virtual menus or digital objects seamlessly using their hands.

Robotics & Human Assistance

Robots designed to work alongside humans need to understand how humans perform tasks. Engineers use egocentric vision datasets to train robots to mimic human actions safely. By analyzing first-person footage of humans assembling parts or cooking meals, robots engage in task learning from demonstrations, significantly speeding up their programming cycles.

Healthcare & Surgery

The medical field utilizes first-person data to enhance training and patient care. Surgeons wearing smart glasses record operations, creating invaluable surgical training datasets for medical students. The annotation of this footage highlights critical anatomical structures and surgical tool usage. Additionally, wearable cameras assist in continuous patient monitoring, allowing healthcare providers to assess daily living activities for rehabilitation purposes.

Retail & Consumer Behavior Analysis

Understanding how a customer navigates a store is highly valuable for retailers. First-person cameras and eye-tracking sensors provide deep insights into the shopper journey. Annotators track which products catch a customer’s eye, how long they read a label, and what items they ultimately place in their basket. This eye-tracking and decision analysis helps stores optimize their layouts and product placements.

Autonomous Systems

Egocentric data is crucial for the safety and efficiency of autonomous systems. Driver behavior analysis relies on cameras monitoring where a driver is looking and how their hands manipulate the steering wheel. Similarly, first-person navigation systems for drones or delivery bots use this data to navigate complex, unpredictable environments safely.

Types of Egocentric Data Annotation

Object Detection & Tracking

Identifying objects in motion from a first-person point of view is a foundational task. Because the camera is constantly moving with the user, objects frequently shift in size, angle, and lighting. Annotators must accurately draw bounding boxes or polygons around objects and track them continuously across frames, even when the footage moves erratically.
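One common way to reduce the manual effort of tracking is to label an object on a few keyframes and fill in the frames between them. The sketch below shows simple linear interpolation between two keyframes. The `Box` class and `interpolate_boxes` function are hypothetical names used for illustration, not the format of any particular annotation platform, and fast head motion will usually need more keyframes than smooth footage.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Box:
    """Axis-aligned box in pixels (hypothetical schema, not a tool's format)."""
    x: float
    y: float
    w: float
    h: float


def interpolate_boxes(start_frame, start, end_frame, end):
    """Linearly interpolate a box for every frame between two keyframes."""
    if end_frame <= start_frame:
        raise ValueError("end_frame must come after start_frame")
    span = end_frame - start_frame
    track = {}
    for frame in range(start_frame, end_frame + 1):
        t = (frame - start_frame) / span  # 0.0 at the first keyframe, 1.0 at the last
        track[frame] = Box(
            x=start.x + t * (end.x - start.x),
            y=start.y + t * (end.y - start.y),
            w=start.w + t * (end.w - start.w),
            h=start.h + t * (end.h - start.h),
        )
    return track


# An annotator labels frames 10 and 14; frames 11 to 13 are filled in.
track = interpolate_boxes(10, Box(100, 50, 40, 40), 14, Box(140, 70, 60, 40))
print(track[12])
```

Interpolated boxes are only a starting point. A human reviewer still needs to correct frames where the object moves non-linearly or leaves the field of view.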

Action Recognition

Action recognition annotation involves labeling specific sequences of movement to define what the user is doing. Instead of just labeling a “cup,” the annotator labels the sequence as “picking up cup.” Other examples include “opening door,” “typing on keyboard,” or “pouring water.” This teaches the AI the mechanics of human behavior over time.
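Action labels are typically stored as time segments rather than per-frame tags. The following is a minimal sketch of that idea, assuming a hypothetical `ActionSegment` record with a start time (inclusive) and an end time (exclusive); it expands segments into one label per frame for a given frame rate.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ActionSegment:
    """One labeled action; field names are illustrative assumptions."""
    label: str
    start_s: float  # inclusive
    end_s: float    # exclusive


def label_at(segments, t):
    """Return the action label active at time t, or None if unlabeled."""
    for seg in segments:
        if seg.start_s <= t < seg.end_s:
            return seg.label
    return None


def frame_labels(segments, fps, num_frames):
    """Expand time segments into one label per video frame."""
    return [label_at(segments, i / fps) for i in range(num_frames)]


segments = [
    ActionSegment("picking up cup", 0.0, 1.0),
    ActionSegment("pouring water", 1.0, 2.5),
]
print(frame_labels(segments, fps=2, num_frames=6))
```

Frames that fall outside every segment come back as `None`, which makes unlabeled gaps easy to spot during review.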

Gaze & Attention Annotation

Knowing where a user is looking is just as important as knowing what their hands are doing. Gaze and attention annotation uses eye-tracking data to label focal points. Annotators create heatmaps of focus areas, helping AI models understand which objects in a cluttered room hold the highest priority for the user at any given moment.
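To make the heatmap idea concrete, here is a small pure-Python sketch that accumulates a Gaussian blob at each gaze point on a coarse grid and normalizes the result. The function names, the grid size, and the `sigma` value are assumptions chosen for illustration; a production pipeline would work at image resolution with an array library.

```python
import math


def gaze_heatmap(points, width, height, sigma=1.0):
    """Accumulate a Gaussian blob per gaze point, then scale the peak to 1.0."""
    heat = [[0.0] * width for _ in range(height)]
    for gx, gy in points:
        for y in range(height):
            for x in range(width):
                d2 = (x - gx) ** 2 + (y - gy) ** 2
                heat[y][x] += math.exp(-d2 / (2 * sigma ** 2))
    peak = max(max(row) for row in heat)
    if peak > 0:
        heat = [[v / peak for v in row] for row in heat]
    return heat


def hottest_cell(heat):
    """Return the (x, y) grid cell with the highest attention value."""
    value, x, y = max(
        (v, x, y) for y, row in enumerate(heat) for x, v in enumerate(row)
    )
    return x, y


# Gaze samples as (x, y) grid coordinates; most cluster near (2, 1).
samples = [(2, 1), (2, 1), (2, 2), (6, 4)]
heat = gaze_heatmap(samples, width=8, height=6)
print(hottest_cell(heat))
```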

Scene Understanding

AI systems must understand the broader context of their environment. Scene understanding involves classifying the overall setting, such as distinguishing between indoor and outdoor locations. Annotators provide context tagging, labeling a sequence as taking place in a “busy kitchen,” a “quiet office,” or a “crowded street,” giving the AI a baseline understanding of expected environmental behaviors.

Audio Annotation

Egocentric devices capture complex audio landscapes. Audio annotation requires distinguishing human speech from ambient background noise. Annotators transcribe spoken words, perform speaker identification, and label critical environmental sounds like sirens, breaking glass, or a boiling kettle.

Challenges in Egocentric Data Annotation

Motion Blur & Instability

Because the camera is attached to a moving human body, the footage is rarely perfectly stable. Constant movement, sudden head turns, and walking create significant motion blur. This instability heavily affects frame clarity, making it incredibly difficult for annotators to accurately label small objects or rapid hand movements.
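A practical way to cope with blur is to score each frame for sharpness and route low-scoring frames to special handling. The sketch below uses the variance of a 4-neighbour Laplacian, a widely used sharpness heuristic, written in plain Python for clarity. The threshold is an assumption that has to be tuned per camera and dataset, and the function names are illustrative.

```python
from statistics import pvariance


def laplacian_variance(gray):
    """Variance of the 4-neighbour Laplacian over interior pixels.

    `gray` is a list of rows of intensities and must be at least 3x3.
    Low values suggest few sharp edges, which often indicates blur.
    """
    h, w = len(gray), len(gray[0])
    responses = []
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            lap = (gray[y - 1][x] + gray[y + 1][x]
                   + gray[y][x - 1] + gray[y][x + 1]
                   - 4 * gray[y][x])
            responses.append(lap)
    return pvariance(responses)


def flag_blurry(frames, threshold):
    """Return indices of frames whose sharpness score is below threshold."""
    return [i for i, f in enumerate(frames) if laplacian_variance(f) < threshold]


# A high-contrast checkerboard (sharp) versus a smooth gradient (no edges).
sharp = [[255 * ((x + y) % 2) for x in range(6)] for y in range(6)]
smooth = [[10 * x for x in range(6)] for y in range(6)]
print(flag_blurry([sharp, smooth], threshold=100.0))
```

Flagged frames do not have to be discarded. Guidelines can instead tell annotators to skip small objects on those frames or to rely on neighbouring sharp frames.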

Occlusion Issues

In a first-person perspective, the user’s own body often gets in the way. Hands frequently block the very objects they are interacting with. Additionally, the camera’s limited field of view means that objects can easily slip in and out of the frame, causing occlusion issues that confuse standard tracking algorithms.

High Annotation Complexity

First-person data annotation is rarely a simple task of drawing one box per frame. It requires multi-layer labeling. An annotator might need to simultaneously label the object, the action being performed on the object, the user’s gaze direction, and the environmental context, making the process highly complex and time-consuming.
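The multi-layer requirement is easier to see as a data structure. The sketch below shows one possible per-frame record that carries object, action, gaze, and scene labels together. Every class and field name here is a hypothetical schema for illustration, not an established standard.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Optional


@dataclass
class ObjectLabel:
    track_id: int
    category: str
    box_xywh: tuple  # (x, y, width, height) in pixels
    occluded: bool = False  # e.g. partly hidden by the wearer's hand


@dataclass
class FrameAnnotation:
    """All annotation layers for one frame (illustrative schema)."""
    frame_index: int
    scene: str
    action: Optional[str] = None
    gaze_xy: Optional[tuple] = None
    objects: list = field(default_factory=list)

    def gazed_object(self):
        """Return the first object whose box contains the gaze point."""
        if self.gaze_xy is None:
            return None
        gx, gy = self.gaze_xy
        for obj in self.objects:
            x, y, w, h = obj.box_xywh
            if x <= gx <= x + w and y <= gy <= y + h:
                return obj
        return None


ann = FrameAnnotation(
    frame_index=42,
    scene="busy kitchen",
    action="pouring water",
    gaze_xy=(130.0, 90.0),
    objects=[
        ObjectLabel(1, "kettle", (300, 40, 80, 120)),
        ObjectLabel(2, "cup", (100, 60, 60, 60), occluded=True),
    ],
)
print(ann.gazed_object().category)
print(json.dumps(asdict(ann)))
```

Keeping the layers in one record makes cross-layer checks possible, such as confirming that the object being looked at matches the action label.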

Privacy & Ethical Concerns

Wearable cameras naturally capture sensitive personal data. They record the faces of bystanders, computer screens displaying private information, and the interiors of private homes. Handling this data requires rigorous anonymization protocols, such as blurring faces and license plates, to ensure privacy and ethical compliance.
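As a rough illustration of region-level anonymization, the sketch below pixelates a rectangular region of a grayscale image by replacing each block with its average value. Pixelation is used here as a simple stand-in for blurring. The sketch assumes the sensitive region (a face or a license plate) has already been located by a detector or an annotator, and that the box lies fully inside the image. The function name and block size are illustrative.

```python
def pixelate_region(gray, box, block=4):
    """Return a copy of `gray` with the box region replaced by block averages.

    `gray` is a list of rows of integer intensities.
    `box` is (x, y, width, height) and must lie inside the image.
    """
    x0, y0, w, h = box
    out = [row[:] for row in gray]  # leave the original image untouched
    for by in range(y0, y0 + h, block):
        for bx in range(x0, x0 + w, block):
            ys = range(by, min(by + block, y0 + h))
            xs = range(bx, min(bx + block, x0 + w))
            mean = sum(gray[y][x] for y in ys for x in xs) // (len(ys) * len(xs))
            for y in ys:
                for x in xs:
                    out[y][x] = mean
    return out


# An 8x8 test image; the 4x4 region at (2, 2) stands in for a detected face.
image = [[y * 8 + x for x in range(8)] for y in range(8)]
anonymized = pixelate_region(image, box=(2, 2, 4, 4), block=4)
print(anonymized[2][2], anonymized[5][5], anonymized[0][0])
```

Whether pixelation at a given block size is strong enough depends on the resolution and the applicable regulations, so the setting should be reviewed with whoever owns compliance for the project.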

Scalability Issues

Continuous video data from wearable devices generates massive file sizes. Processing and labeling hundreds of hours of high-definition, multi-sensor data requires significant computational power and large teams of human annotators. Managing these scalability issues is a major hurdle for AI development teams.

Best Practices for Egocentric Data Annotation

To achieve high-quality results, teams must employ strict best practices. Use a combination of frame-by-frame and sequence-based annotation to capture both precise object locations and fluid actions over time.

Relying solely on humans or solely on software is inefficient. Combine human annotators with AI-assisted tools to speed up the process while maintaining accuracy. Pre-labeling software can draw initial bounding boxes, leaving human experts to refine complex interactions and handle edge cases.
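One simple way to split work between software and people is to route model pre-labels by confidence. The sketch below assumes a hypothetical `PreLabel` record with a model confidence score and an `accept_threshold` of 0.9; both the names and the threshold are assumptions, and the right cut-off depends on how the model's scores are calibrated.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PreLabel:
    """A model-suggested label awaiting human review (illustrative schema)."""
    frame_index: int
    category: str
    confidence: float


def route_prelabels(prelabels, accept_threshold=0.9):
    """Split pre-labels into a fast-track queue and a full-review queue."""
    fast_track, full_review = [], []
    for p in prelabels:
        if p.confidence >= accept_threshold:
            fast_track.append(p)
        else:
            full_review.append(p)
    return fast_track, full_review


prelabels = [
    PreLabel(0, "cup", 0.97),
    PreLabel(1, "kettle", 0.55),
    PreLabel(2, "hand", 0.90),
]
fast_track, full_review = route_prelabels(prelabels)
print([p.frame_index for p in fast_track], [p.frame_index for p in full_review])
```

Even the fast-track queue benefits from spot checks, since a confident model can still be wrong on blurred or occluded frames.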

Always define clear annotation guidelines before starting a project. Annotators need exact rules on how to handle severe motion blur or partial occlusion. Utilize multi-modal labeling frameworks that allow teams to sync video, audio, and sensor data perfectly. Ensure strict privacy compliance, adhering to regulations like GDPR or HIPAA if the datasets contain personal or medical information. Finally, implement continuous quality assurance loops to catch and correct labeling errors early.
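A quality assurance loop needs a measurable signal. One common choice for bounding boxes is intersection over union (IoU) between two annotators' labels for the same frame. The sketch below flags frames where agreement falls below a chosen level. The function names and the 0.5 cut-off are assumptions for illustration.

```python
def iou(a, b):
    """Intersection over union of two (x, y, width, height) boxes."""
    ax, ay, aw, ah = a
    bx, by, bw, bh = b
    inter_w = max(0, min(ax + aw, bx + bw) - max(ax, bx))
    inter_h = max(0, min(ay + ah, by + bh) - max(ay, by))
    inter = inter_w * inter_h
    union = aw * ah + bw * bh - inter
    return inter / union if union > 0 else 0.0


def disagreements(labels_a, labels_b, min_iou=0.5):
    """Frames where two annotators disagree or only one of them labeled."""
    flagged = []
    for frame in sorted(set(labels_a) | set(labels_b)):
        if frame not in labels_a or frame not in labels_b:
            flagged.append(frame)
        elif iou(labels_a[frame], labels_b[frame]) < min_iou:
            flagged.append(frame)
    return flagged


annotator_a = {0: (0, 0, 10, 10), 1: (0, 0, 10, 10), 2: (4, 4, 6, 6)}
annotator_b = {0: (0, 0, 10, 10), 1: (5, 0, 10, 10)}
print(disagreements(annotator_a, annotator_b))
```

Flagged frames can go to a senior reviewer, and recurring disagreements are a hint that the guidelines need a clearer rule.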

Tools & Technologies Used

Professional annotation teams rely on robust platforms to handle complex wearable AI datasets. Annotation platforms like CVAT, Labelbox, and V7 offer the necessary infrastructure for managing large-scale video files and coordinating remote teams.

AI-assisted annotation tools are integrated into these platforms to accelerate the workflow. Computer vision models are used for pre-labeling, automatically detecting common objects and suggesting bounding boxes.

Specialized software is required for the more unique aspects of egocentric data. Gaze tracking software syncs eye-movement coordinates with video frames to generate accurate attention heatmaps. Sensor fusion tools are employed to align timestamps from video feeds, audio tracks, and motion accelerometers, ensuring the annotator sees a cohesive recreation of the user’s experience.
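Timestamp alignment can be sketched as a nearest-neighbour lookup: for each video frame, find the closest sensor sample in time and reject matches that are too far apart. The example below assumes both streams share a clock, that sensor timestamps are sorted and non-empty, and that `max_gap` is a tolerance chosen by the team. All names are illustrative.

```python
from bisect import bisect_left


def nearest_index(sorted_ts, t):
    """Index of the timestamp in `sorted_ts` closest to t (list must be non-empty)."""
    i = bisect_left(sorted_ts, t)
    if i == 0:
        return 0
    if i == len(sorted_ts):
        return len(sorted_ts) - 1
    before, after = sorted_ts[i - 1], sorted_ts[i]
    return i if (after - t) < (t - before) else i - 1


def align(frame_ts, sensor_ts, max_gap):
    """Map each frame to its nearest sensor sample, or None if too far apart."""
    matches = []
    for t in frame_ts:
        j = nearest_index(sensor_ts, t)
        matches.append(j if abs(sensor_ts[j] - t) <= max_gap else None)
    return matches


frame_ts = [0.0, 0.5, 1.0, 2.0]    # video frame times in seconds
sensor_ts = [0.0, 0.4, 0.9, 1.1]   # accelerometer sample times in seconds
print(align(frame_ts, sensor_ts, max_gap=0.2))
```

Frames that map to `None` reveal gaps in the sensor stream, which is worth surfacing to annotators rather than silently pairing them with stale readings.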

How Macgence Helps with Egocentric Data Annotation

Developing sophisticated AI requires data partners who understand the nuances of the first-person perspective. Macgence offers deep expertise in handling complex, multi-modal datasets. We provide scalable annotation teams trained specifically to navigate the challenges of motion blur, occlusion, and multi-layer labeling.

Our teams build custom workflows tailored to your specific industry requirements. Whether you are developing AR/VR applications, programming next-generation robotics, or building healthcare AI, we adapt our tools and processes to meet your exact specifications.

Macgence ensures accuracy through high-quality QA processes, utilizing multiple layers of review to guarantee precision in every frame. We prioritize secure data handling, maintaining strict compliance protocols to protect sensitive personal information and ensure your datasets meet all regulatory standards.

Future of Egocentric AI & Annotation

The demand for egocentric data annotation will only accelerate as spatial computing and wearable AI become mainstream. Devices that blend digital elements with the physical world need a reliable first-person understanding of the scene to function properly.

We will see a rapid rise in real-time annotation pipelines. As edge computing improves, AI models will increasingly process and annotate first-person data on the fly, reducing latency for the end user.

Integration with generative AI will allow developers to synthesize massive egocentric vision datasets, creating varied training scenarios without the need for manual physical recording. Furthermore, the integration of first-person data with digital twins will allow organizations to simulate human interactions within highly accurate virtual replicas of factories, hospitals, and retail spaces, driving an unprecedented demand for high-quality annotated first-person datasets.

Preparing for Next-Generation AI Models

Egocentric data annotation bridges the gap between how machines observe the world and how humans actually experience it. By capturing and labeling data from the first-person perspective, developers can train AI models to understand context, predict intent, and interact safely within complex environments.

As augmented reality, robotics, and autonomous systems continue to evolve, the reliance on high-quality wearable AI datasets will become an industry standard. Building these advanced systems requires meticulous attention to detail, rigorous privacy standards, and specialized annotation workflows.

Looking for high-quality egocentric data annotation? Partner with Macgence.

FAQs

Q: What is egocentric data annotation?

Ans: It is the process of labeling video, audio, and sensor data captured from a first-person point of view, typically using wearable cameras or smart glasses.

Q: How does egocentric data differ from traditional datasets?

Ans: Traditional datasets rely on stationary, third-person cameras observing a scene from a distance. Egocentric data is captured from the user’s exact perspective, moving as they move and seeing exactly what they see.

Q: Which industries use egocentric data annotation?

Ans: Major industries include AR/VR development, robotics, healthcare (surgical training and patient monitoring), retail behavior analysis, and autonomous navigation systems.

Q: What are the main challenges in egocentric data annotation?

Ans: Key challenges include severe motion blur, occlusion (hands blocking objects), complex multi-layer labeling requirements, and strict privacy concerns regarding bystanders.

Q: What tools are used for egocentric data annotation?

Ans: Teams use platforms like CVAT, Labelbox, and V7, combined with AI-assisted pre-labeling models, gaze tracking software, and sensor fusion tools.

Q: Why is egocentric data annotation important for AI?

Ans: It teaches AI to understand real-world context, recognize complex human actions, and improve human-AI interactions by interpreting the immediate physical environment from the user’s perspective.

Q: Can egocentric data annotation be fully automated?

Ans: While AI tools can assist with pre-labeling and object tracking, the complexity of action recognition, occlusion, and context still requires significant human oversight and expertise for high accuracy.
