- Why Scaling AI Training Data Is Challenging

- What Does “Scalable AI Data Annotation” Really Mean?

- Proven Strategies to Scale AI Training Data Without Losing Quality

- Common Mistakes to Avoid While Scaling Training Data

- How to Measure Quality While Scaling

- Why Partnering with the Right Data Annotation Provider Matters

- Building Data Pipelines for the Future

How to Scale AI Training Data Without Losing Quality

Many AI models fail to reach their full potential not because of flawed algorithms, but due to poor data quality at scale. When enterprises move from pilot projects to full-scale production, they face a difficult dilemma: how to increase the volume of training data quickly without letting error rates climb.

For organizations deploying AI, bad data leads to biased models, poor decision-making, and wasted resources. Pushing massive amounts of unverified information through your pipeline will actively harm your system’s performance. The solution lies in achieving scalable AI data annotation. This approach allows teams to process massive datasets rapidly while maintaining the strict accuracy required for enterprise-grade machine learning.

Why Scaling AI Training Data Is Challenging

Scaling training data requires much more than simply hiring additional labelers. As data volume increases, maintaining label consistency and reliability becomes highly complex.

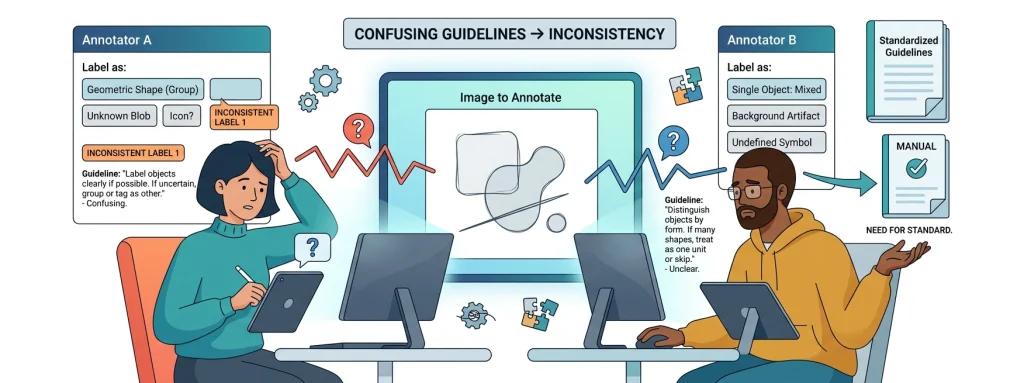

Data quality naturally tends to degrade as operations expand. Annotation bottlenecks occur when complex edge cases require deep human review, slowing down the entire pipeline. Furthermore, workforce inconsistency among human annotators leads to subjective labeling. Two annotators might view the same image or text and label it differently if guidelines are not perfectly clear.

The challenge grows steeper when dealing with multilingual and domain-specific complexity. Processing large AI datasets for medical, legal, or financial models requires deep subject matter expertise, not just basic language skills. Without standardized workflows, handling this volume securely and accurately quickly turns into an operational nightmare.

What Does “Scalable AI Data Annotation” Really Mean?

Scalable AI data annotation is the process of expanding your data labeling operations seamlessly to handle massive volumes without experiencing a drop in accuracy. It relies on building a system that can grow on demand while enforcing strict quality controls.

The core pillars of this approach include:

- Accuracy at scale: Ensuring the one-millionth label is just as precise as the first.

- Speed without compromise: Meeting aggressive project deadlines while maintaining high confidence scores.

- Process standardization: Creating uniform guidelines that leave no room for guesswork.

- Continuous quality monitoring: Catching and correcting errors in real time.

True scalability means your output is repeatable, measurable, and consistently high-quality.

Proven Strategies to Scale AI Training Data Without Losing Quality

Build a Robust Annotation Workflow

Your operation needs airtight Standard Operating Procedures (SOPs). Create highly detailed annotation guidelines that include clear examples of edge cases. Always maintain version control for your labeling rules so that when project requirements shift, every annotator instantly transitions to the updated standards.

Use a Hybrid Approach (Human + AI)

Relying entirely on manual labor is too slow, but relying purely on automation introduces errors. Human-in-the-loop systems offer the best of both worlds. You can use existing AI models for pre-labeling vast amounts of data, then direct your human annotators to verify the machine’s work and correct edge cases through active learning loops. This results in faster scaling with maintained accuracy.

Invest in Skilled & Domain-Specific Annotators

General crowdsourcing falls short when handling complex, large AI datasets. Domain expertise matters immensely in fields like healthcare, finance, and law. Ensure your workforce undergoes continuous training and certification. Implement rigorous performance tracking systems to identify which labelers need additional coaching.

Implement Multi-Level Quality Assurance

A single pass by one annotator is rarely enough. Implement two-layer or three-layer QA systems to review complex data points. Use consensus scoring, where multiple annotators label the same item and the system calculates agreement. Regularly test your workforce against gold standard datasets—pre-labeled data with known correct answers—to ensure ongoing accuracy.

Leverage Annotation Tools & Automation

Spreadsheets and basic tools will crush your productivity. Invest in advanced annotation platforms that feature auto-labeling and strict validation rules. Workflow automation can route tasks to the most qualified annotators based on their past performance, keeping the pipeline moving smoothly.

Scale Globally with Localization Support

If your AI operates globally, your data must reflect that. Scaling requires multilingual annotation capabilities and deep cultural context awareness. A distributed, global workforce ensures your models understand regional nuances, idioms, and visual contexts that a localized team might miss.

Common Mistakes to Avoid While Scaling Training Data

Many organizations stumble by prioritizing speed over quality, rushing to hit volume targets while ignoring accuracy metrics. Poorly defined annotation guidelines lead directly to messy, unusable data.

Ignoring QA processes is another major pitfall. If you fail to double-check the work, errors compound rapidly. Likewise, relying on an untrained or ultra-low-cost workforce often results in having to relabel the entire dataset later. Finally, a lack of feedback loops means annotators never learn from their mistakes, guaranteeing those errors will be repeated.

How to Measure Quality While Scaling

What gets measured gets improved. To maintain high standards while scaling training data, you must track specific key metrics constantly.

Monitor your overall annotation accuracy rate to ensure it meets your baseline requirements. Track Inter-annotator agreement (IAA) to see how often different team members agree on the same label; a low IAA indicates your guidelines are confusing. Keep a close eye on individual error rates, and constantly evaluate your turnaround time versus quality balance to ensure speed isn’t degrading performance.

Why Partnering with the Right Data Annotation Provider Matters

Building an internal data pipeline is expensive and time-consuming. Outsourcing to experts provides immediate access to a pre-trained workforce and scalable infrastructure. This approach guarantees faster turnaround times and leverages built-in QA systems that have been refined over thousands of projects.

When looking for a provider, seek out teams with proven experience handling large AI datasets. They must possess strong QA frameworks, deep domain expertise, and rigorous data security compliance.

Macgence stands out as a trusted partner for scalable AI data annotation. By combining an expert human workforce with advanced technology workflows, Macgence ensures your training data is accurate, secure, and delivered on time, empowering your AI models to perform at their absolute best.

Building Data Pipelines for the Future

Scaling training data absolutely does not mean you have to sacrifice quality. By implementing the right strategies, you can expand your operations rapidly and securely.

Success requires a careful balance of standardized processes, highly trained people, and smart technology. The future of enterprise AI depends entirely on high-quality, scalable data pipelines. Ensure your foundation is rock solid before you scale.

FAQs

Ans: – Scalable AI data annotation is the ability to rapidly increase the volume of data being labeled for machine learning models without experiencing any decrease in data quality or accuracy.

Ans: – Quality is maintained by creating strict annotation guidelines, utilizing multi-level Quality Assurance (QA) processes, implementing consensus scoring, and using human-in-the-loop hybrid approaches.

Ans: – The main challenges include maintaining consistent label quality, preventing bottlenecks in the workflow, managing a large human workforce, and handling complex domain-specific or multilingual data.

Ans: – Human-in-the-loop combines the speed of automated AI pre-labeling with the critical thinking and accuracy of human reviewers. This hybrid method ensures nuanced edge cases are handled correctly while overall volume increases.

Ans: – Common metrics include the overall annotation accuracy rate, Inter-Annotator Agreement (IAA), individual labeler error rates, and testing against gold standard datasets.

Ans: – Yes, outsourcing to a specialized provider offers immediate access to trained professionals, scalable infrastructure, and established QA processes, saving businesses significant time and operational costs.

You Might Like

May 23, 2026

How Egocentric Gesture Recognition Labeling Improves Human-Robot Interaction

Embodied AI and first-person perception systems are reshaping how machines understand human behavior. As wearable cameras and point-of-view (POV) devices become more advanced, they generate massive amounts of egocentric video data. This unique perspective allows AI models to see the world exactly as a human user does. To make sense of this data, developers rely […]

May 22, 2026

Training Embodied AI with First-Person Video for Robotics

Embodied artificial intelligence marks a massive shift in how machines interact with their environments. Traditional robots follow rigid, pre-programmed instructions to perform repetitive tasks. Modern AI systems, however, need contextual visual perception to navigate unstructured spaces safely and effectively. To achieve this level of autonomy, engineers rely heavily on first-person video for robotics. This approach […]

May 21, 2026

The secret to smarter robots: Why Humanoid Robot Manipulation Data matters

Advancements in embodied AI and humanoid robotics are rapidly changing how machines interact with the physical world. While early robots were largely confined to rigid, pre-programmed tasks, modern machines require genuine manipulation intelligence to safely navigate and engage with complex, human-centric environments. Without this intelligence, a robot cannot properly grasp objects or assist humans in […]

Previous Blog

Previous Blog