- What is Edge Case Data in Robotics AI?

- Why Edge Cases Matter More Than You Think

- The 35% Performance Improvement Explained

- Types of Edge Case Data That Matter in Robotics

- How to Collect Edge Case Data Effectively

- Annotation Challenges and Best Practices

- Integrating Edge Case Data into Training Pipelines

- The Future of Edge Case-Driven Robotics AI

- Securing Real-World Reliability

- FAQs

How Edge Case Data Boosted Robotics AI Performance by 35%

Robotics AI failures rarely happen under normal, predictable conditions. Instead, they occur in rare, unpredictable scenarios that standard testing environments simply fail to replicate. A warehouse robot might flawlessly navigate clear aisles but completely misidentify a heavily shadowed pallet in a poorly lit corner.

This is where edge case data for robotics AI becomes essential. By actively targeting the extreme anomalies that confuse machine learning models, engineers can drastically improve reliability. In one recent deployment, addressing these specific outliers improved detection accuracy by 35%.

High-quality edge case data is the missing piece in scaling robust, real-world robotics systems. Let’s look at exactly what these data points are, why they matter, and how they drive massive performance gains.

What is Edge Case Data in Robotics AI?

Edge case data refers to rare, unusual, or unexpected scenarios that are not well represented in standard training datasets. While a conventional robot perception dataset might include thousands of images of perfect cardboard boxes in bright lighting, it often lacks examples of the messy reality of physical environments.

Common examples in robotics include:

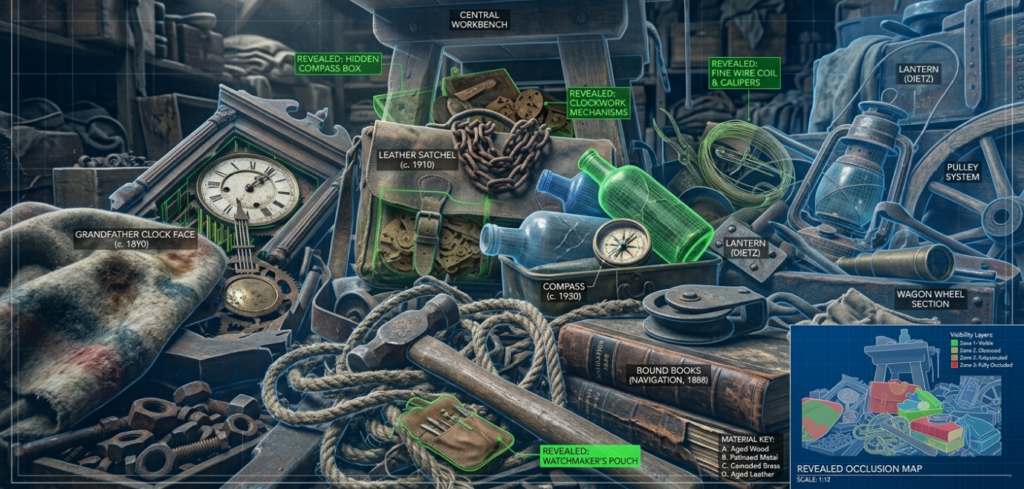

- Occluded objects: Items partially hidden behind other structures in crowded warehouses.

- Unusual lighting conditions: Extreme glare, sudden shadows, or flickering warehouse lights.

- Damaged or irregular objects: Crushed boxes, torn labels, or uniquely shaped inventory.

- Human-robot interaction anomalies: Workers stepping unexpectedly into a machine’s path.

Traditional datasets fail to capture real-world variability because they prioritize volume over diversity. An edge-enriched robot perception dataset deliberately seeks out these bizarre, infrequent scenarios to teach the AI how to handle the unexpected.

Why Edge Cases Matter More Than You Think

Robotics systems operate in highly dynamic, unpredictable environments. Engineers constantly fight the long-tail problem in AI: the reality that the vast majority of system failures stem from a tiny percentage of rare scenarios.

Ignoring these edge cases has severe consequences. Reduced model accuracy in production quickly leads to increased downtime and high error rates. More importantly, it creates massive safety risks, especially in industrial robotics where a single miscalculation can cause physical harm or property damage.

Vision models and perception systems struggle the most with unseen conditions. A model trained only on well-lit images can fail outright when a forklift casts a dark shadow across the scene. Improving edge case coverage provides disproportionate performance gains, turning fragile algorithms into resilient, production-ready tools.

The 35% Performance Improvement Explained

To understand the impact of outlier training, look at a recent deployment of an autonomous sorting robot. Initially, the robot’s vision model was trained on a massive, standard robot perception dataset. It achieved incredibly high accuracy in lab testing, but real-world performance plummeted once deployed on the factory floor.

The key challenges became obvious quickly. The system failed repeatedly in cluttered environments. It misclassified objects whenever the factory’s lighting shifted or when items were partially occluded by overlapping conveyor belts.

The solution was a highly targeted collection of edge case data for robotics AI. The engineering team gathered new data focusing entirely on rare object orientations, extreme lighting variations, and motion blur scenarios. They applied targeted annotation, heavily utilizing precise bounding boxes, semantic segmentation, and depth labeling for these specific anomalies.

The outcome was transformative. The system saw a 35% improvement in overall detection accuracy. False positives and negatives dropped significantly, and the robot finally demonstrated the real-world adaptability required for continuous industrial use.
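One way to see where a gain like this comes from is to score the model separately on each edge case category instead of on the test set as a whole. The sketch below is illustrative only: the `EvalRecord` type, the tag names, and the numbers in the comments are hypothetical and do not come from the deployment described above. Whether the 35% figure is a relative or an absolute gain is not stated, so the helper simply shows how a relative gain is computed.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class EvalRecord:
    """One evaluated detection. All fields are hypothetical."""
    tag: str       # e.g. "normal", "occlusion", "low_light"
    correct: bool  # did the prediction match the ground-truth label?


def accuracy_by_tag(records):
    """Return {tag: accuracy} so weak slices are not hidden by the average."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for record in records:
        totals[record.tag] += 1
        hits[record.tag] += int(record.correct)
    return {tag: hits[tag] / totals[tag] for tag in totals}


def relative_improvement(before, after):
    """Relative change between two scores, e.g. 0.60 -> 0.81 is about 0.35."""
    if before <= 0:
        raise ValueError("baseline score must be positive")
    return (after - before) / before
```

A model can look strong on the overall average while scoring poorly on a small slice such as occluded objects, which is why the per-tag breakdown is the more useful number when deciding what data to collect next.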

Types of Edge Case Data That Matter in Robotics

To build a truly robust robot perception dataset, engineers must focus on several distinct categories of edge cases:

Environmental Edge Cases

These involve shifts in the surrounding world. For indoor robots, this means sudden lighting changes, reflections, or deep shadows. For outdoor systems, it includes weather conditions like heavy rain, fog, or blinding sunlight.

Object-Level Edge Cases

Objects are rarely perfect. A strong perception system needs to recognize deformed, damaged, or partially visible objects. A crushed box or a label printed upside-down shouldn’t completely break the sorting algorithm.

Sensor-Level Edge Cases

Hardware isn’t perfect, either. Models must be trained to handle noisy sensor data, dead camera pixels, or sudden depth inconsistencies caused by reflective surfaces confusing LiDAR arrays.

Behavioral Edge Cases

Environments with humans are inherently unpredictable. Training data must include unexpected human movement, sudden obstacles, or unusual interactions that a robot might encounter while navigating a shared workspace.
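These four categories can double as a tagging scheme for checking how well a dataset covers each one. The following is a minimal sketch under stated assumptions: the `EdgeCaseCategory` enum, the `coverage_report` function, and the 5% threshold are hypothetical names and values chosen for illustration, not an established standard.

```python
from collections import Counter
from enum import Enum


class EdgeCaseCategory(Enum):
    ENVIRONMENTAL = "environmental"  # lighting, reflections, weather
    OBJECT = "object"                # deformed, damaged, partially visible
    SENSOR = "sensor"                # noise, dead pixels, depth errors
    BEHAVIORAL = "behavioral"        # unexpected human movement, obstacles


def coverage_report(sample_tags, min_share=0.05):
    """Return the share of samples per category and the under-covered ones.

    sample_tags: list of sets of EdgeCaseCategory, one set per sample
                 (an empty set means an ordinary, non-edge-case sample).
    min_share:   hypothetical threshold below which a category is flagged.
    """
    total = len(sample_tags)
    if total == 0:
        raise ValueError("sample_tags must not be empty")
    counts = Counter(cat for tags in sample_tags for cat in tags)
    shares = {cat: counts[cat] / total for cat in EdgeCaseCategory}
    gaps = [cat for cat, share in shares.items() if share < min_share]
    return shares, gaps
```

A sample may carry several tags at once, for example a crushed box seen through a noisy depth sensor, so the shares do not need to sum to one.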

How to Collect Edge Case Data Effectively

Gathering rare data is notoriously difficult. Effective real-world data collection strategies often rely on a hybrid approach, combining field data capture in varied physical environments with advanced digital simulation.

Data sourcing methods include creating controlled scenarios where engineers deliberately recreate weird lighting or damaged goods. Crowdsourced data collection can also bring in diverse environmental images, while continuous feedback loops from deployed robots help flag and record production failures.
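The feedback loop from deployed robots could, for instance, flag frames where the model is unsure of itself. The sketch below assumes each detection carries a confidence score between 0 and 1; the `Detection` type, the `flag_for_review` function, and the 0.3 and 0.7 thresholds are hypothetical and would need tuning for a real system.

```python
from dataclasses import dataclass


@dataclass
class Detection:
    """One model output for a frame. All fields are hypothetical."""
    frame_id: str
    label: str
    confidence: float  # model score in [0, 1]


def flag_for_review(detections, low=0.3, high=0.7):
    """Return frame ids (in first-seen order) with an uncertain detection.

    A score inside [low, high] suggests the model is neither clearly
    accepting nor clearly rejecting the object, which makes the frame a
    candidate for recording and later annotation.
    """
    flagged = []
    seen = set()
    for det in detections:
        if low <= det.confidence <= high and det.frame_id not in seen:
            seen.add(det.frame_id)
            flagged.append(det.frame_id)
    return flagged
```

A confidence filter of this kind only surfaces uncertain predictions. Confident mistakes have to be caught another way, for example through operator reports or downstream sorting errors.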

This is where specialized data partners like Macgence become invaluable. They build scalable data collection pipelines and offer domain-specific dataset curation. Finding the right balance between massive data quantity and highly specific data quality is much easier with an experienced data partner managing the pipeline.

Annotation Challenges and Best Practices

Edge cases are inherently harder to annotate than standard data. Complex scenes featuring heavy occlusion or object overlap create extreme ambiguity in labeling. If a box is 90% hidden by a forklift, annotators often struggle to determine exactly where to place the bounding box.

Best practices demand expert annotators who understand the specific requirements of robotics AI. Teams should use multi-layer annotation, combining bounding boxes, segmentation masks, and depth tagging on a single frame. Strict quality assurance workflows and validation protocols are mandatory. Clean, precise labels on messy, complex edge cases directly lead to better model generalization.
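One common quality assurance check for ambiguous boxes is to have two annotators label the same frame and compare their boxes by intersection over union (IoU). The sketch below assumes boxes are given as `(x_min, y_min, x_max, y_max)` tuples; the `needs_adjudication` helper and its 0.8 threshold are hypothetical choices for illustration.

```python
def iou(box_a, box_b):
    """Intersection over union of two (x_min, y_min, x_max, y_max) boxes."""
    ax1, ay1, ax2, ay2 = box_a
    bx1, by1, bx2, by2 = box_b
    # Overlap width and height are clamped at zero for disjoint boxes.
    inter_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0.0, min(ay2, by2) - max(ay1, by1))
    inter = inter_w * inter_h
    union = (ax2 - ax1) * (ay2 - ay1) + (bx2 - bx1) * (by2 - by1) - inter
    return inter / union if union > 0 else 0.0


def needs_adjudication(box_a, box_b, min_iou=0.8):
    """True when two annotators disagree enough to need a third opinion."""
    return iou(box_a, box_b) < min_iou
```

Heavily occluded objects tend to produce low agreement, so a check like this routes exactly the hard frames to a senior reviewer.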

Integrating Edge Case Data into Training Pipelines

Once you have an edge-enriched robot perception dataset, you have to use it correctly. Standard data augmentation (digitally altering existing images) can help, but it rarely replaces the value of real edge data.
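As a rough illustration of what such augmentation looks like, the sketch below applies a brightness change and a synthetic shadow band to a grayscale image stored as a list of rows of 0 to 255 pixel values. The function names and parameter ranges are hypothetical, and a production pipeline would normally use an image library instead of plain lists.

```python
import random


def scale_brightness(image, factor):
    """Multiply every pixel by factor and clamp the result to 0..255."""
    return [[min(255, max(0, round(p * factor))) for p in row] for row in image]


def add_shadow_band(image, col_start, col_end, darkness=0.4):
    """Darken columns [col_start, col_end) to imitate a cast shadow."""
    return [
        [round(p * darkness) if col_start <= x < col_end else p
         for x, p in enumerate(row)]
        for row in image
    ]


def random_lighting_variant(image, rng):
    """Return a copy with a random brightness and one random shadow band."""
    factor = rng.uniform(0.3, 1.5)           # hypothetical brightness range
    width = len(image[0])
    start = rng.randrange(width)
    end = rng.randrange(start, width) + 1    # band covers at least one column
    return add_shadow_band(scale_brightness(image, factor), start, end)
```

Synthetic shadows like this are uniform and hard-edged, which is one reason augmented images tend to complement real captures of difficult lighting and not substitute for them.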

Engineers frequently use weighted training, which gives rare cases a larger share of the loss or of each training batch so they are not drowned out by the thousands of normal examples the model already handles well. Active learning loops are also critical. They create a continuous improvement cycle: deploy the robot, collect the specific scenarios where it fails, retrain the model on those failures, and push the improved version back to production.
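Weighted training can take several forms. The sketch below shows one of them, inverse-frequency sampling, in which each sample is drawn with probability inversely proportional to how common its tag is. The function names and tag strings are hypothetical; weighting the loss per class is an equally valid alternative that is not shown here.

```python
import random
from collections import Counter


def inverse_frequency_weights(tags):
    """Give each sample a weight of 1 / (number of samples sharing its tag)."""
    counts = Counter(tags)
    return [1.0 / counts[tag] for tag in tags]


def sample_batch(samples, tags, batch_size, rng):
    """Draw a batch with replacement so that every tag is equally likely."""
    weights = inverse_frequency_weights(tags)
    return rng.choices(samples, weights=weights, k=batch_size)
```

With eight normal samples and two glare samples, each tag receives a total weight of one, so roughly half of every batch comes from the glare examples even though they make up only a fifth of the data. Oversampling this aggressively can cause overfitting to a handful of rare images, so the weights are often softened in practice.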

The Future of Edge Case-Driven Robotics AI

The next generation of robotics relies heavily on multimodal datasets that seamlessly blend vision, depth, and sensor fusion. As autonomous learning systems become more sophisticated, edge cases will define the competitive advantage in the robotics industry. Companies that invest early in high-quality data pipelines designed to capture and process these rare anomalies will consistently outperform those relying on basic, generalized datasets.

Securing Real-World Reliability

Edge cases are not strange exceptions you can ignore. They largely determine how your system performs in production. The 35% improvement in detection accuracy from the sorting robot deployment shows how much training for the unexpected matters when building truly autonomous machines.

Investing in edge case data for robotics AI directly leads to more reliable, scalable systems that actually work outside the laboratory. To ensure your models are ready for the unpredictable real world, partner with data experts like Macgence to develop the robust datasets your robotics systems desperately need.

FAQs

What is edge case data in robotics AI?

Ans: It refers to rare, unusual, or unexpected real-world scenarios, such as extreme lighting or damaged objects, that are not well represented in standard training data.

Why is edge case data important for robotics AI?

Ans: It teaches AI models how to handle the unpredictable, dynamic environments they will actually face in production, reducing critical errors and safety risks.

How did edge case data improve performance by 35%?

Ans: By specifically training the model on the exact outlier scenarios that previously caused it to fail, drastically reducing false positives and misclassifications in cluttered environments.

What makes a high-quality robot perception dataset?

Ans: A high-quality dataset balances volume with diversity, actively including challenging environmental, object-level, and sensor-level anomalies alongside standard examples.

How can companies collect edge case data?

Ans: Companies can use a mix of controlled physical scenario creation, field data capture, simulation, and continuous feedback loops from robots currently deployed in the field.

Can synthetic data be used for edge cases?

Ans: Yes. Synthetic data generated in simulation can help artificially create rare or dangerous scenarios that are too costly or difficult to capture in the real world.

Which industries benefit most from edge case data?

Ans: Manufacturing, warehousing, autonomous driving, and agriculture benefit immensely, as these industries feature highly dynamic environments with high safety and accuracy requirements.
